Optimizing the Fraud Identification Experience for IBM Analysts

Role: Owned research interviews and high-fidelity design proposals. Collaborated on problem definition and ideation.

Team: Daniya Faisal (UX Designer), Ashley Bates (UX Designer), Prashansa Pawar (Content Designer), program and offering leadership across IBM Cloud.

Timeline: July 2020 - August 2020

Context

I worked on an onboarding project at IBM to kick off a new service in IBM Cloud. IBM Cloud is at the center of IBM’s overall strategy and is a rising competitor in the $620 billion global cloud industry. Whoa…lots of 0s in that number.

One of IBM Cloud’s key differentiators is security and compliance, which help IBM be secure to the core. IBM Fraud Analysts work around the clock to identify and close accounts committing fraudulent activity such as bitcoin mining, identity theft, dark web hosting, illegal activity, and shoe botting.

Analysts use a minimum of six prorated third-party tools to help identify, analyze, and close fraudulent accounts and to analyze fraud trends, including:

Our challenge

How can IBM Fraud Analysts better monitor and identify trends for global fraud and receive updates on new and existing trends to address fraudulent accounts on the IBM Cloud?

The ask

The cloud team asked that we deliver:

A visionary concept for a fraud trend tracking experience for fraud analysts.

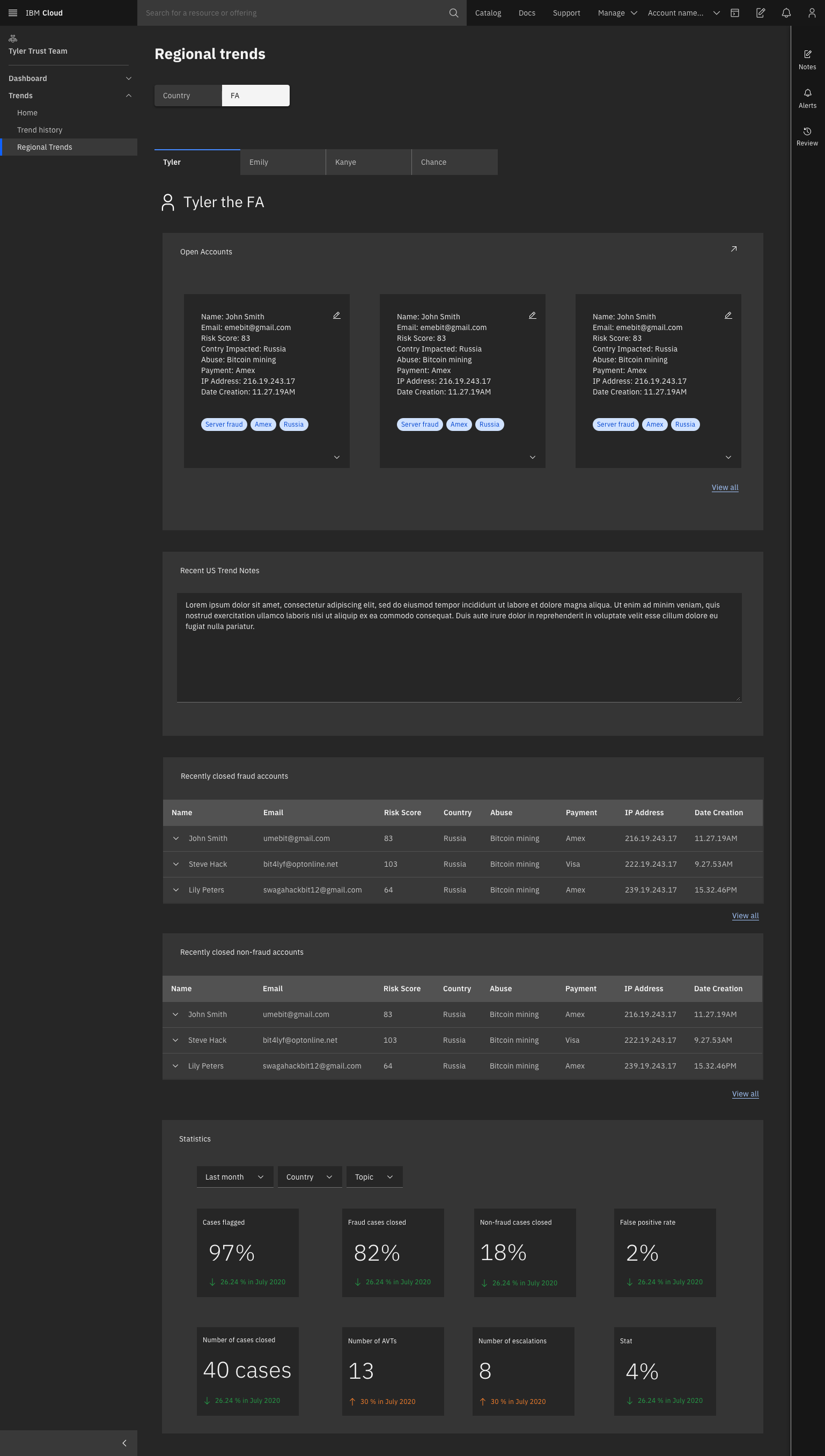

An experience-based roadmap for how we can get there, from MVP to birthday cake to, ultimately, the wedding cake.

To illustrate our design challenge, here’s a narrative that we created to exemplify the issues at hand.

Larry just started his job at IBM. During Larry’s first month, IBM announced a partnership with Yeezys to collaborate on an IBM Yeezy shoe.

Larry heard about the new collab and was so excited to get himself a pair.

Larry received an internal news email that the shoe had finally launched! He clicked on the link, landed on the shoe item page and looked for the purchase button, but was upset to find a bolded ‘Sorry - sold out’ message.

It turns out that James the shoebotter purchased all of the new IBM Yeezy shoes with the intention of reselling them at a markup. James had a track record of fraud and used one of his multiple fake accounts to purchase the shoes.

So...what if there was a way to catch James before he bought all of the new IBM Yeezys so that people like Larry had a chance to buy the new, cool IBM swag?

Note - this is a fake scenario and IBM has no association or partnership with Yeezys.

Discovery and research

The interviews

We set up two user interviews with fraud analyst subject matter experts to build up our domain knowledge around fraud tracking. We then completed a questions and assumptions activity to identify our knowledge gaps, which guided our research goals:

How do fraud analysts stay updated on trends?

What does the workflow look like when trying to monitor and identify trends?

How do fraud analysts share, communicate and keep track of trends?

What difficulties do analysts face when keeping track of trends?

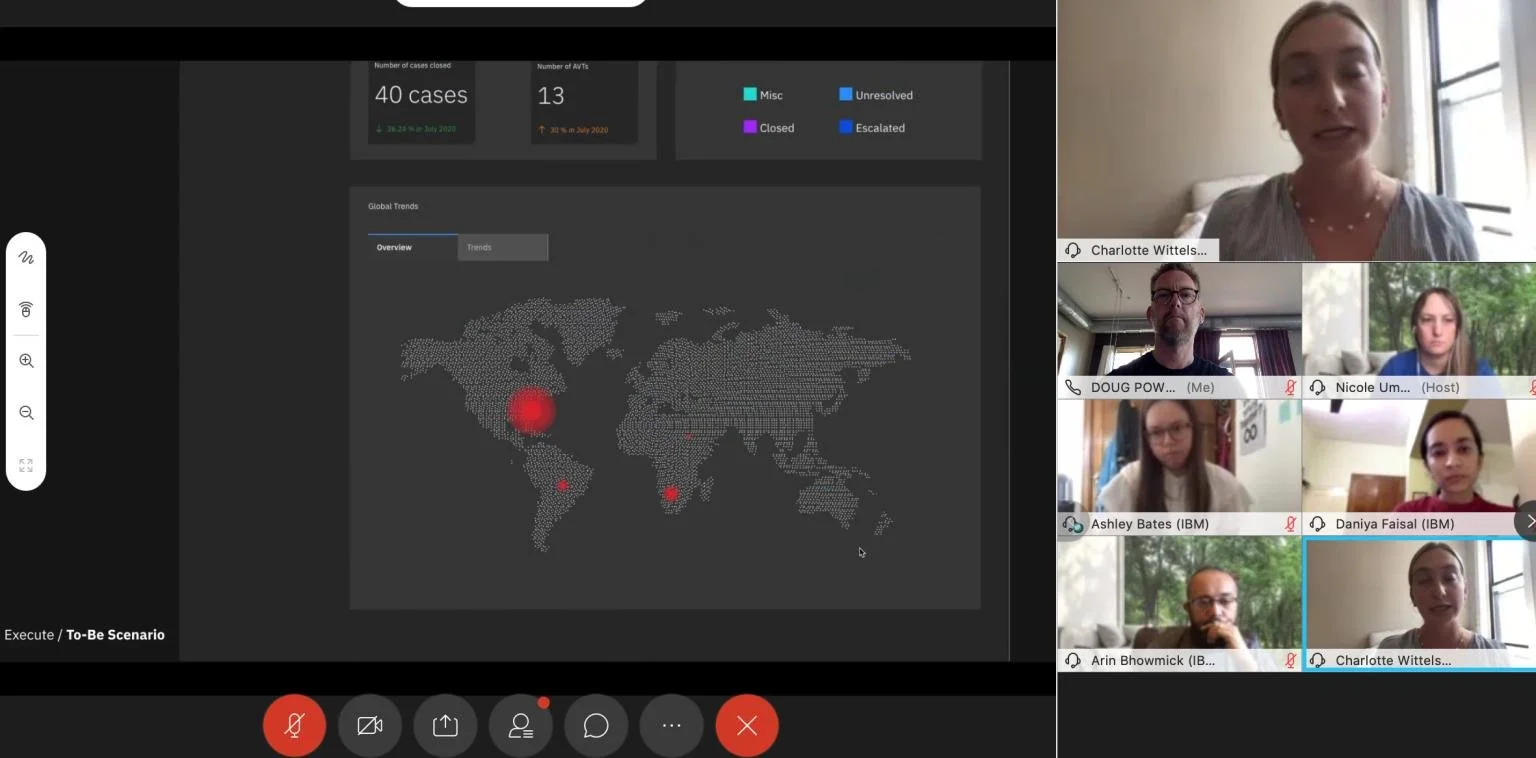

Afterwards, I led three contextual interviews to closely observe fraud analysts’ day-to-day tasks and experiences so we could better understand our users’ needs and pain points.

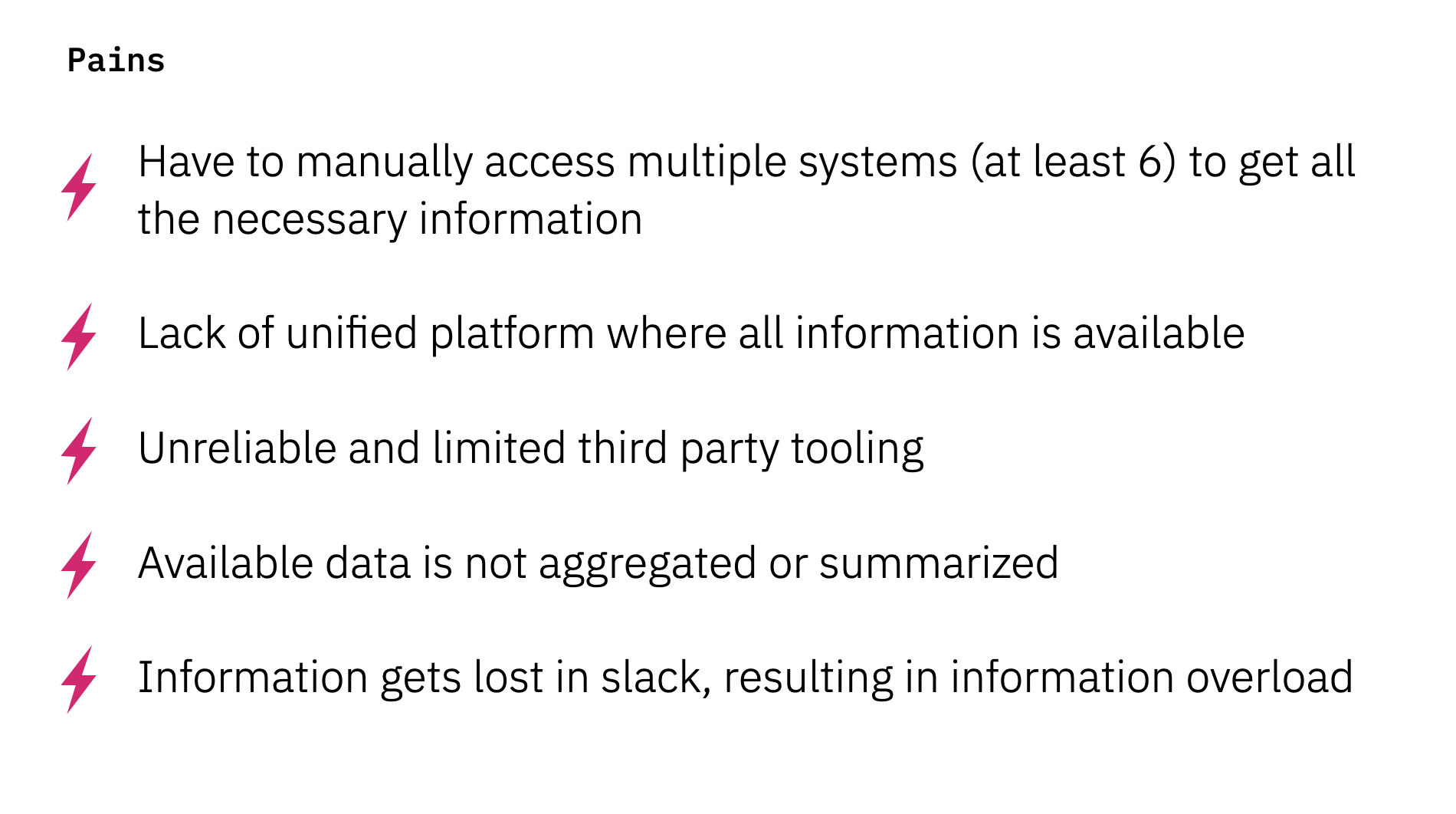

Findings:

Communication happens only through Slack, which can be overwhelming and lead analysts to miss information.

There is no way to view a concise summary of current or past trends or global patterns.

Unable to notify other teams of trend development. Trend tracking is currently reactive rather than proactive.

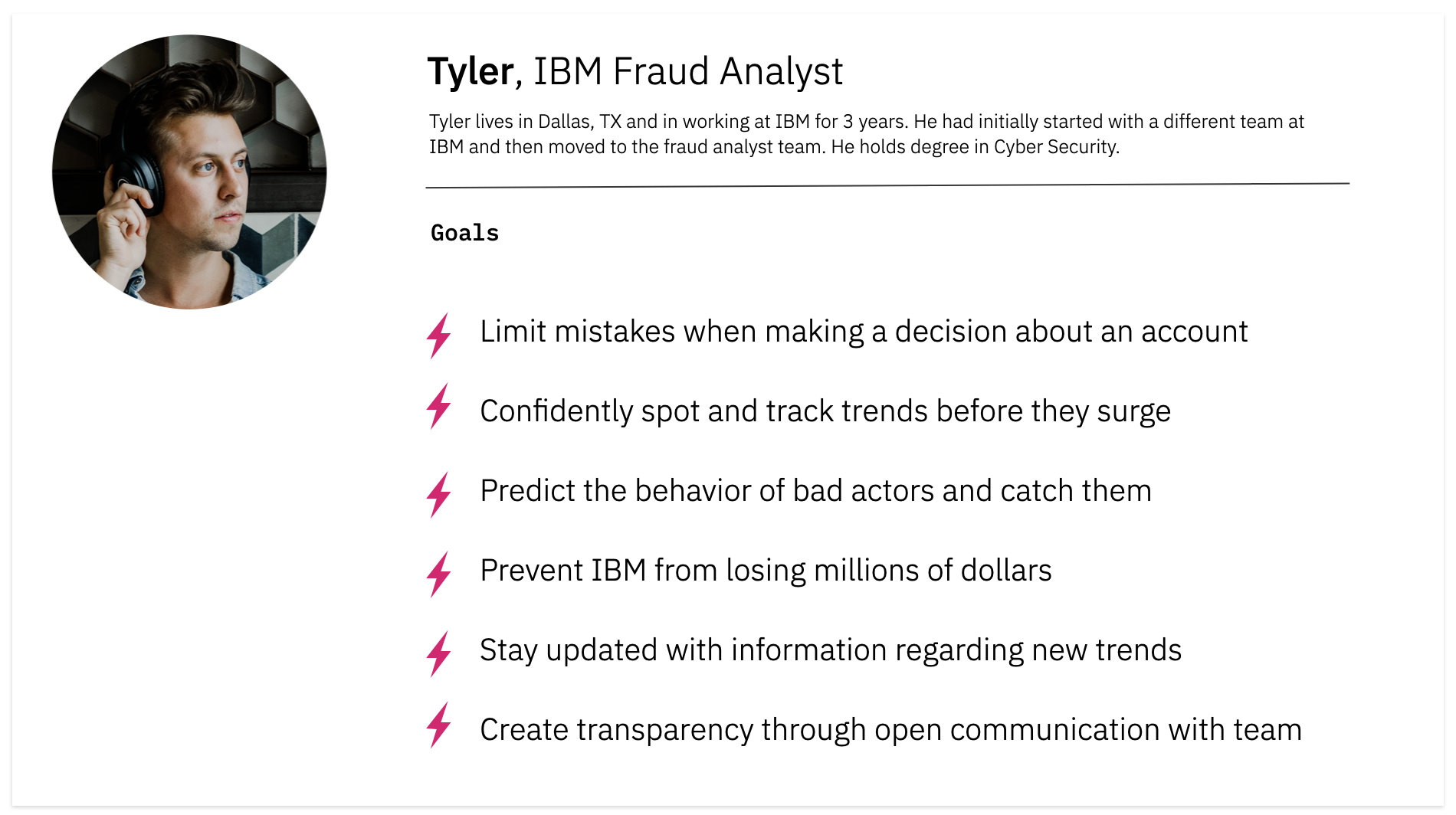

The user

With a focus on user outcomes, we translated our research findings into a user persona and identified our persona’s main goals.

The current experience

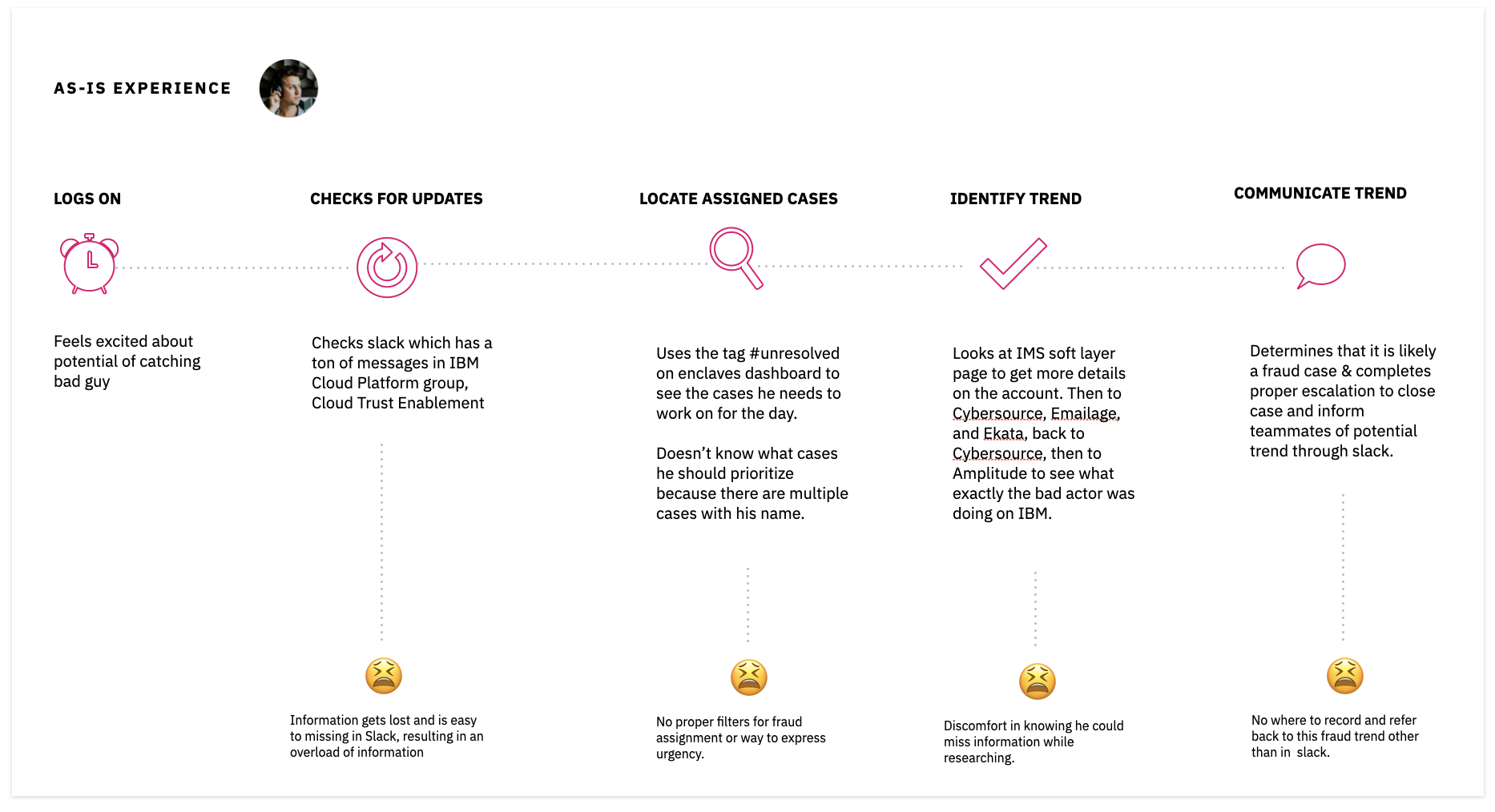

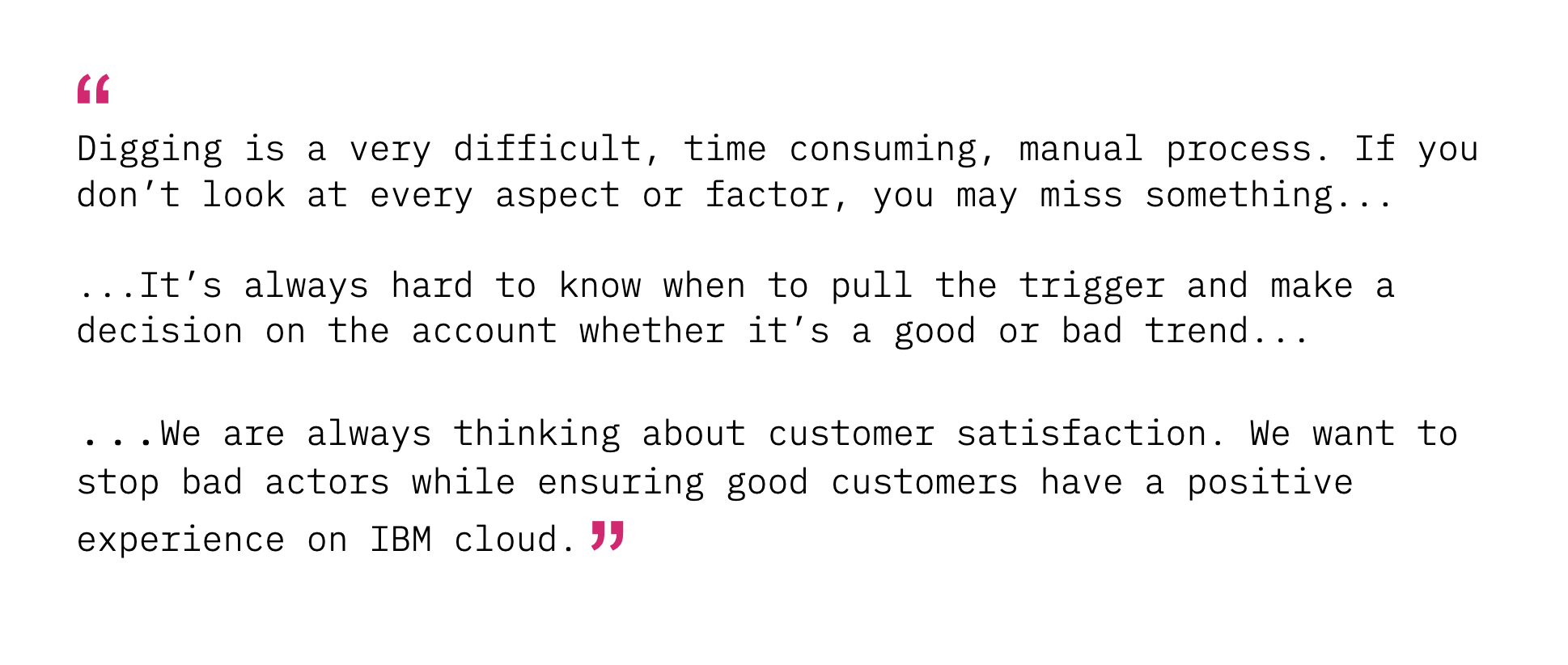

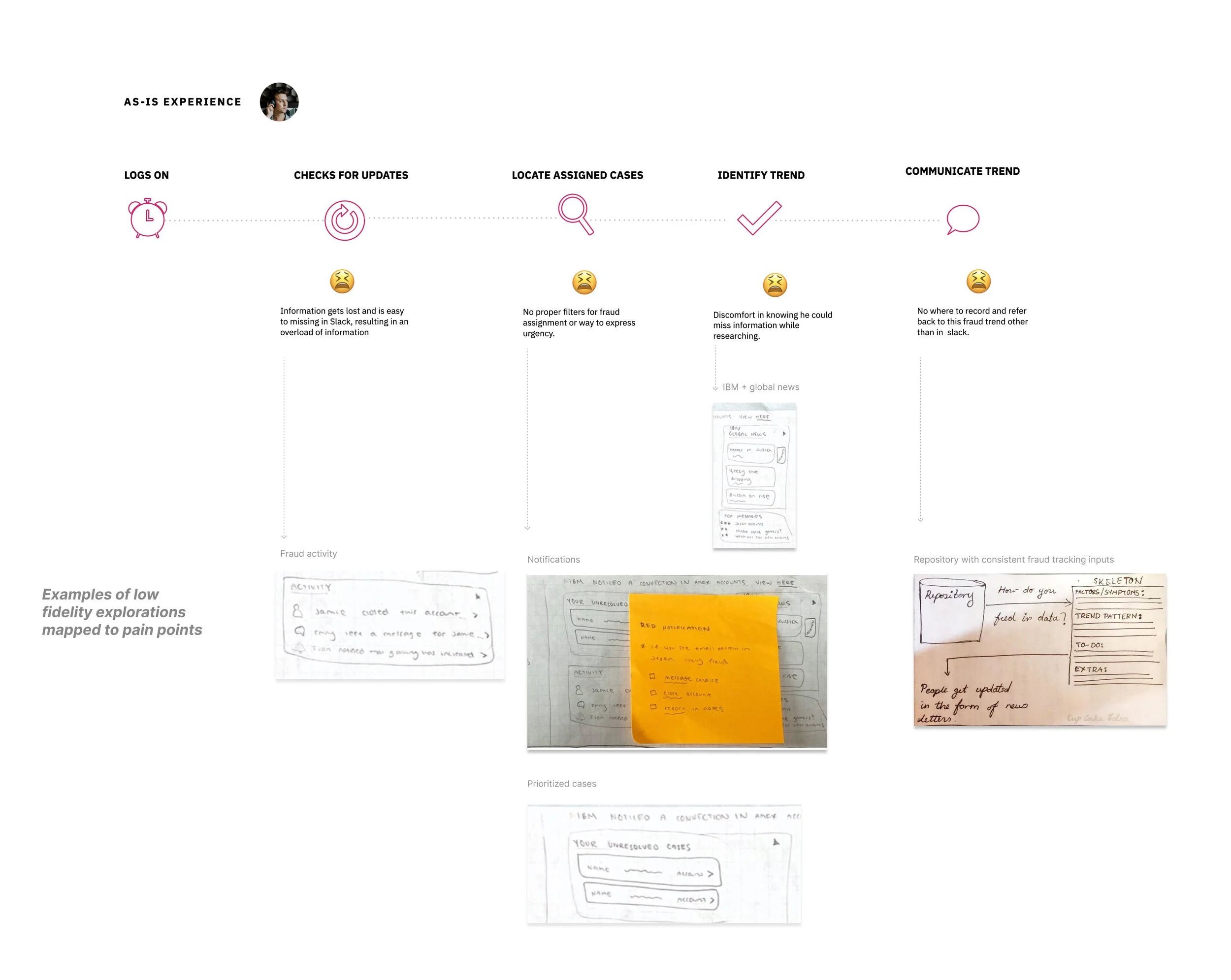

We then created an as-is scenario map to align on how fraud analysts currently find, track, and communicate trends. This visual reference allowed us to identify pain points and narrow down the scope of our ask into hills.

The quotes

Hills

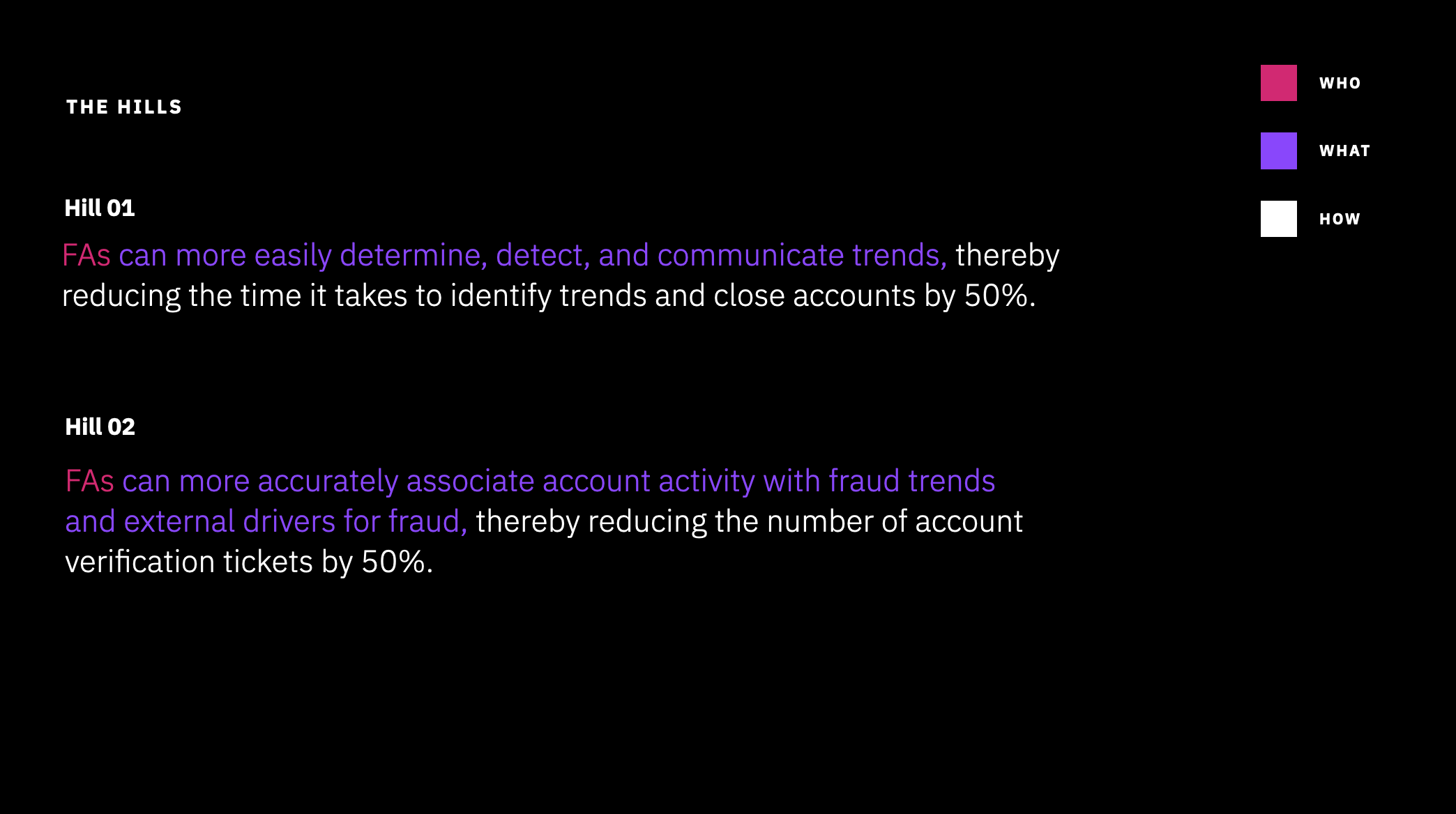

Hills are user need statements tied to a measurable outcome. We leveraged hills to communicate our project intent and went through multiple iterations after ongoing discussions with our PM and development stakeholders. These hills guided our design.

As this was a short-term incubator project, we wanted to leave our team with clear KPIs to tangibly measure whether our design proposals improved the Fraud Analysts’ experience and how that would simultaneously bring value to IBM when implemented.

Ideation

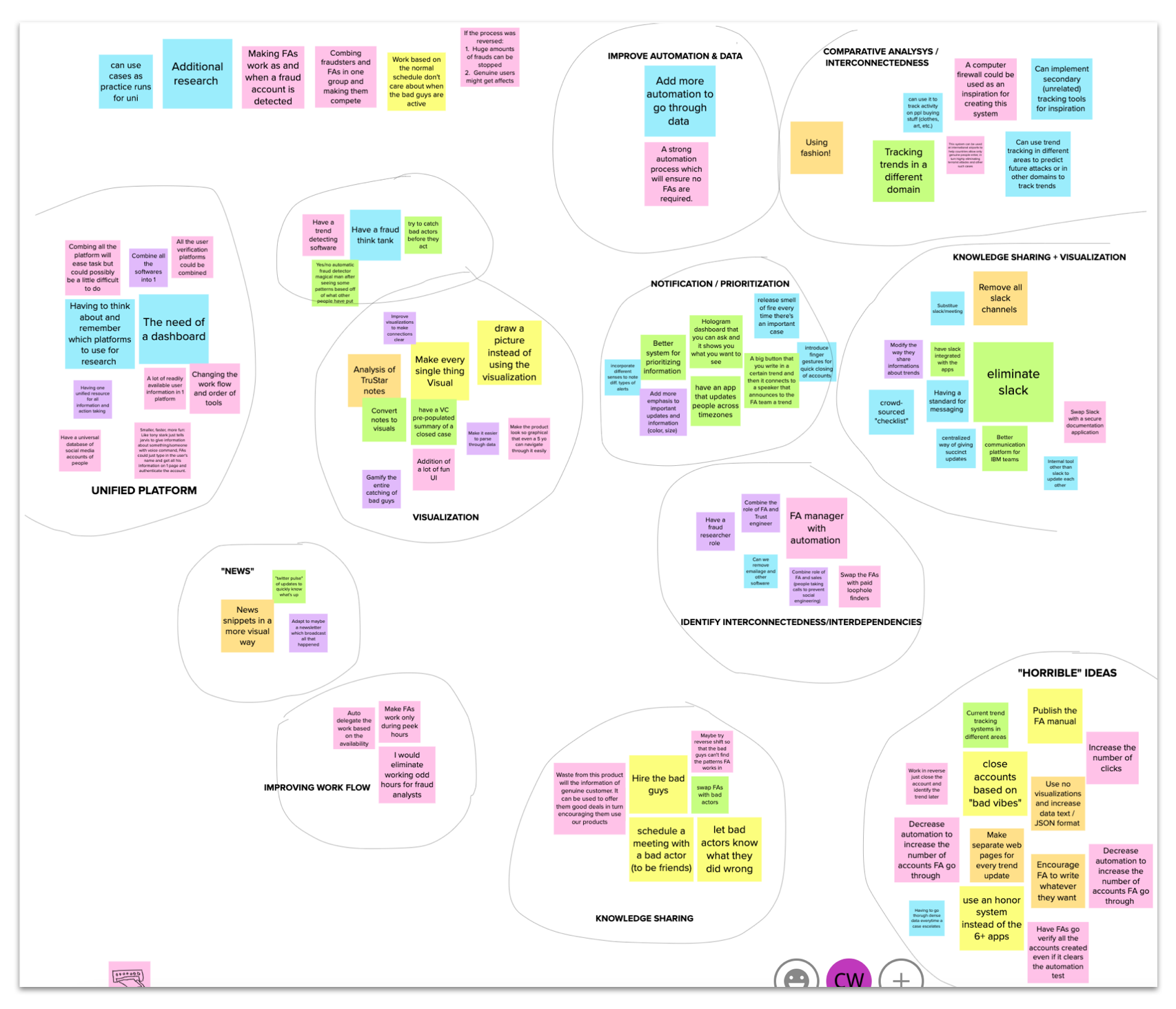

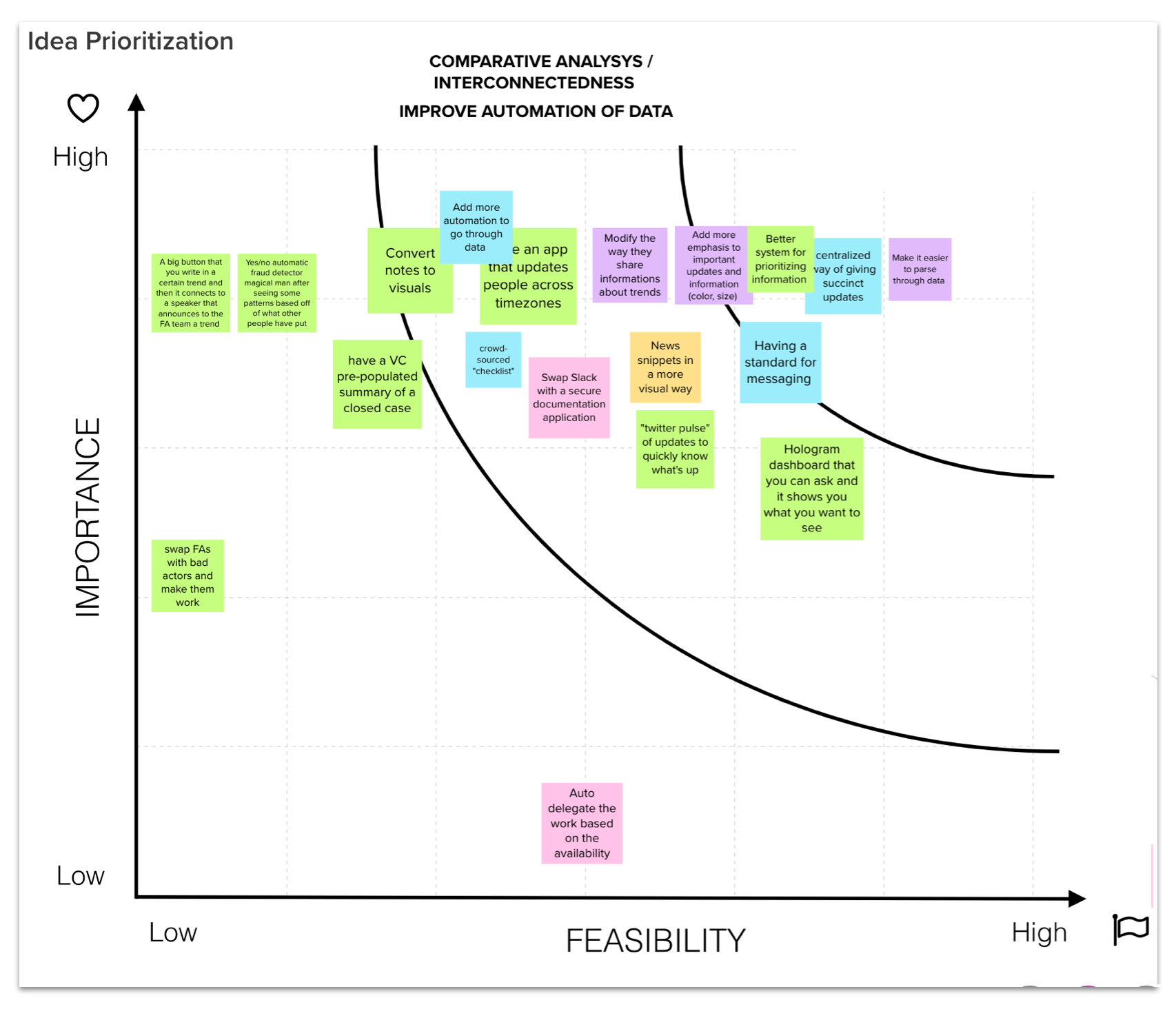

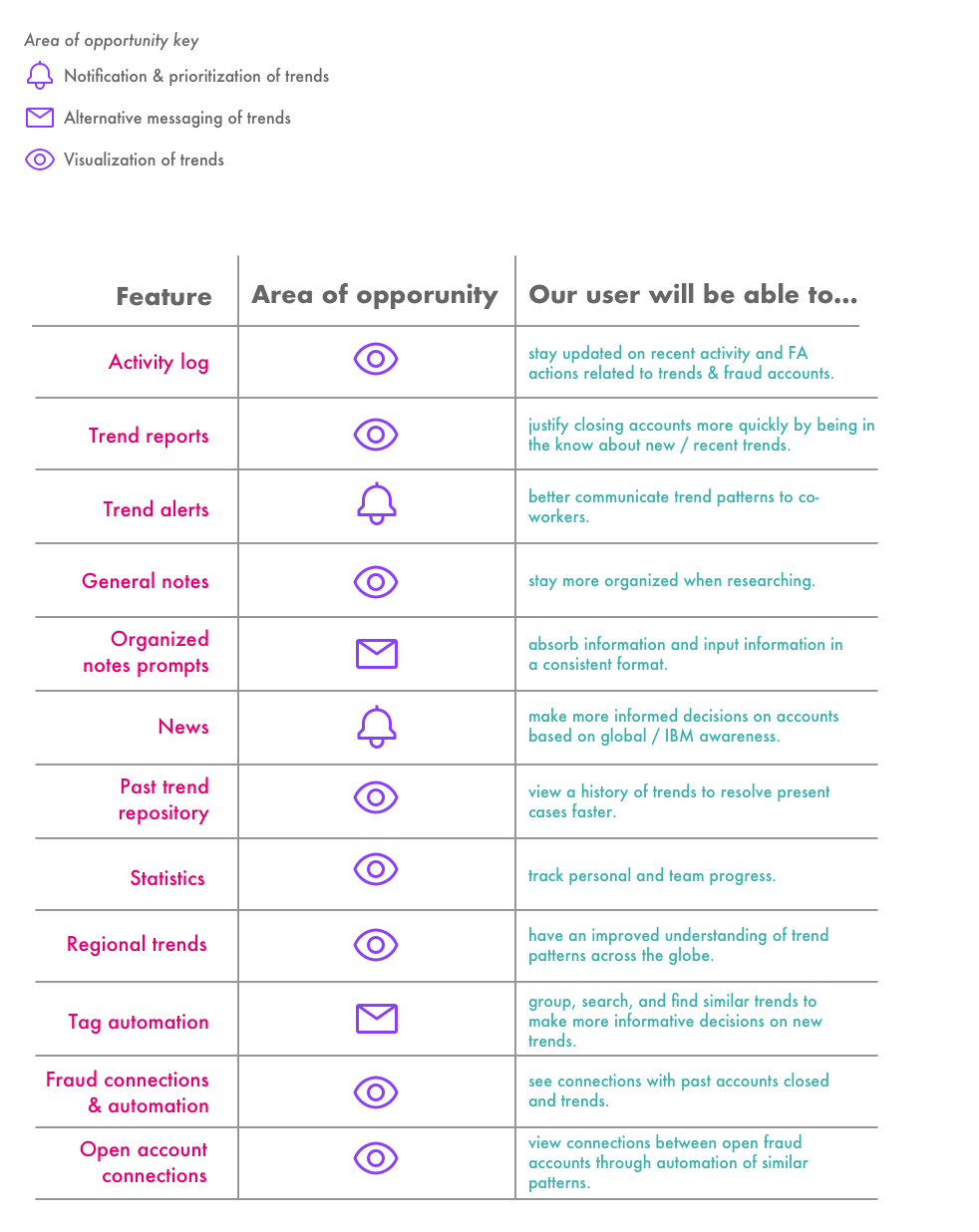

After completing our research, we moved on to the ideation phase to brainstorm design concepts. We used an affinity diagram to group common ideas and established three main areas of opportunity that informed our design explorations. We also completed a prioritization grid with our stakeholders to narrow down our ideas based on user needs, feasibility, and priority.

Designing the concepts

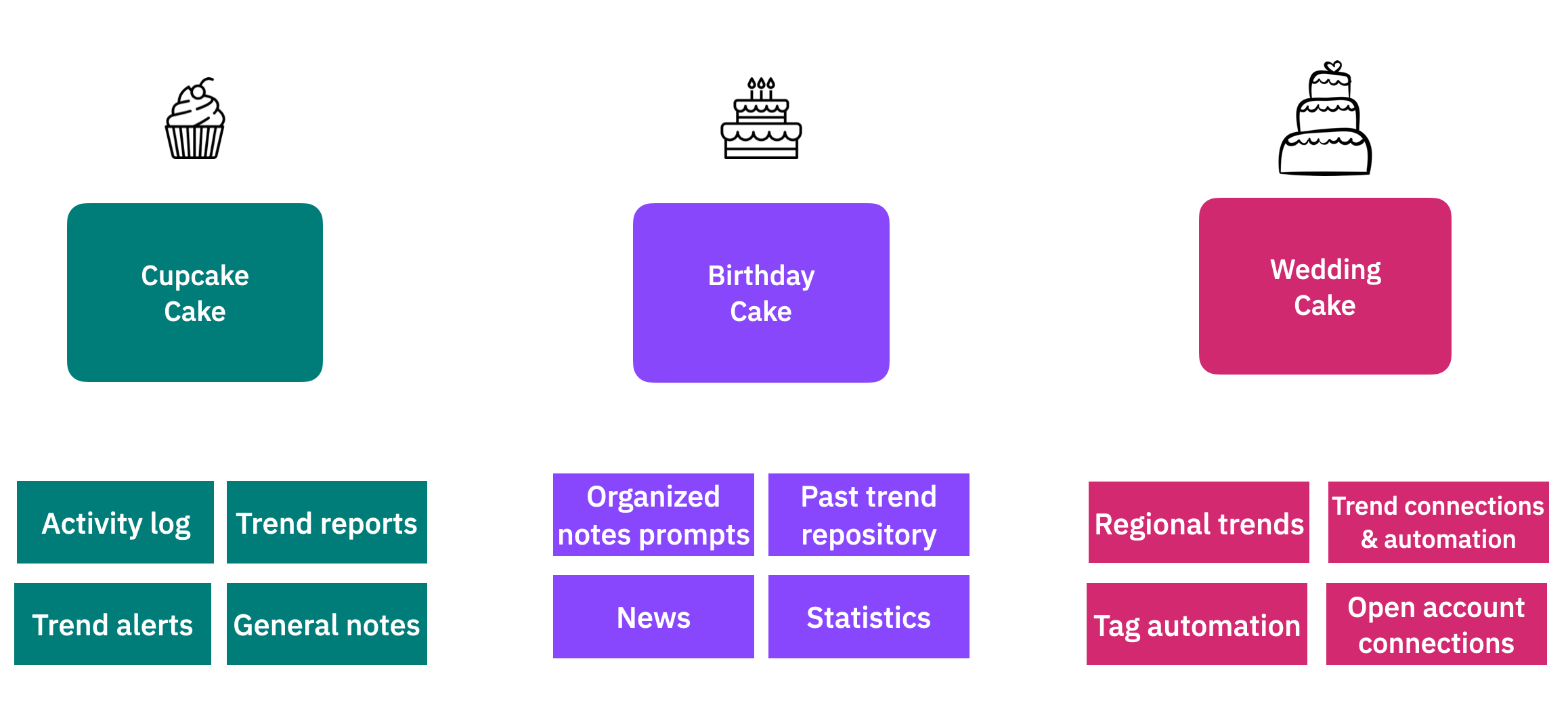

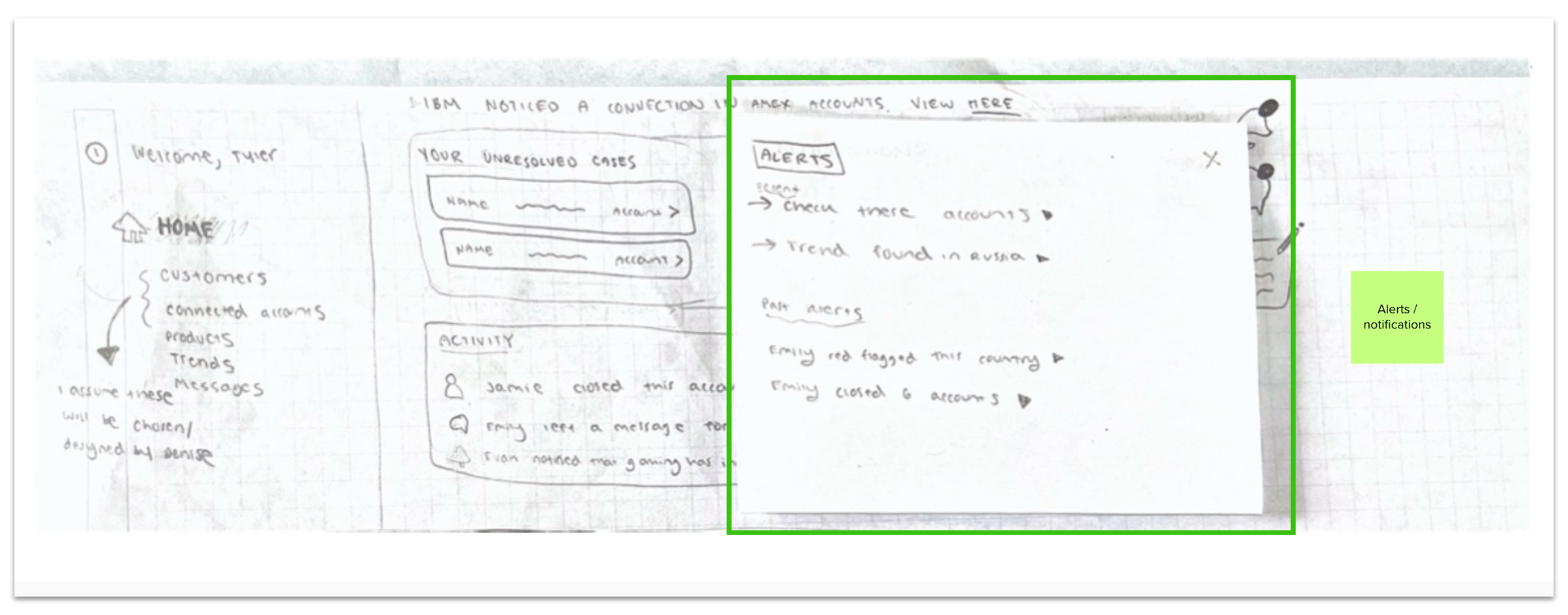

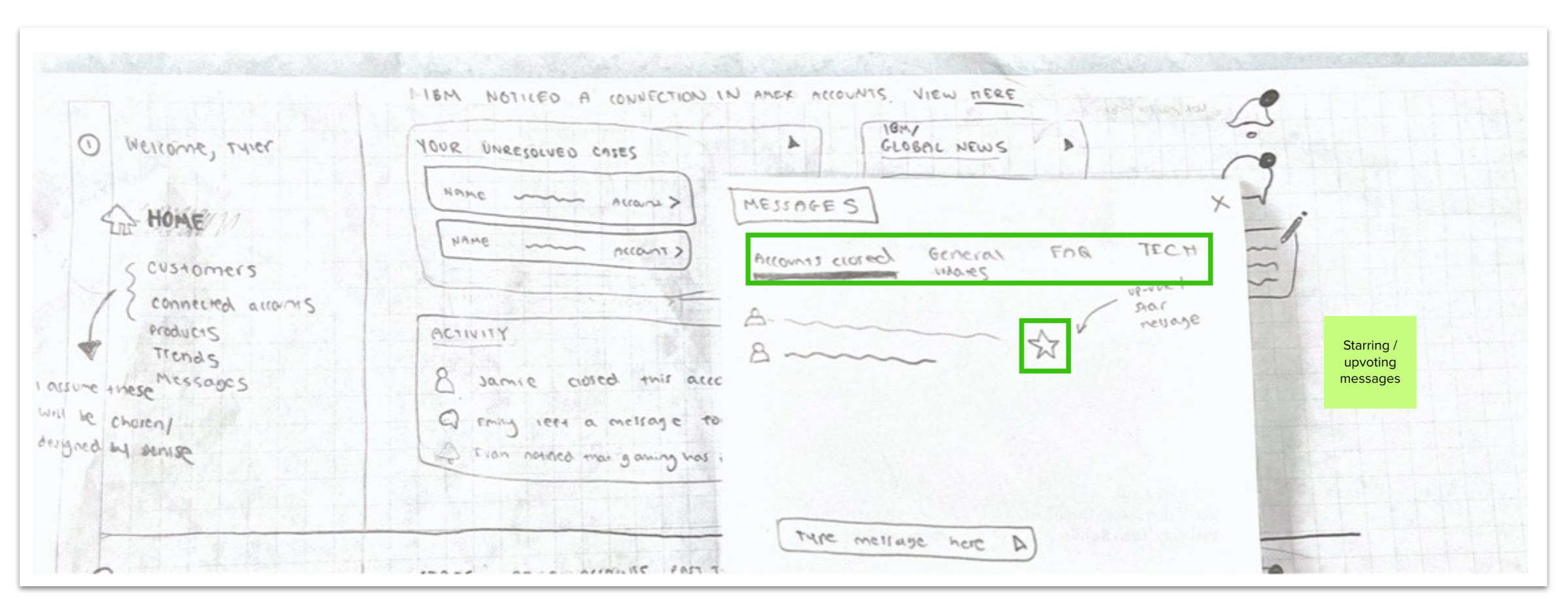

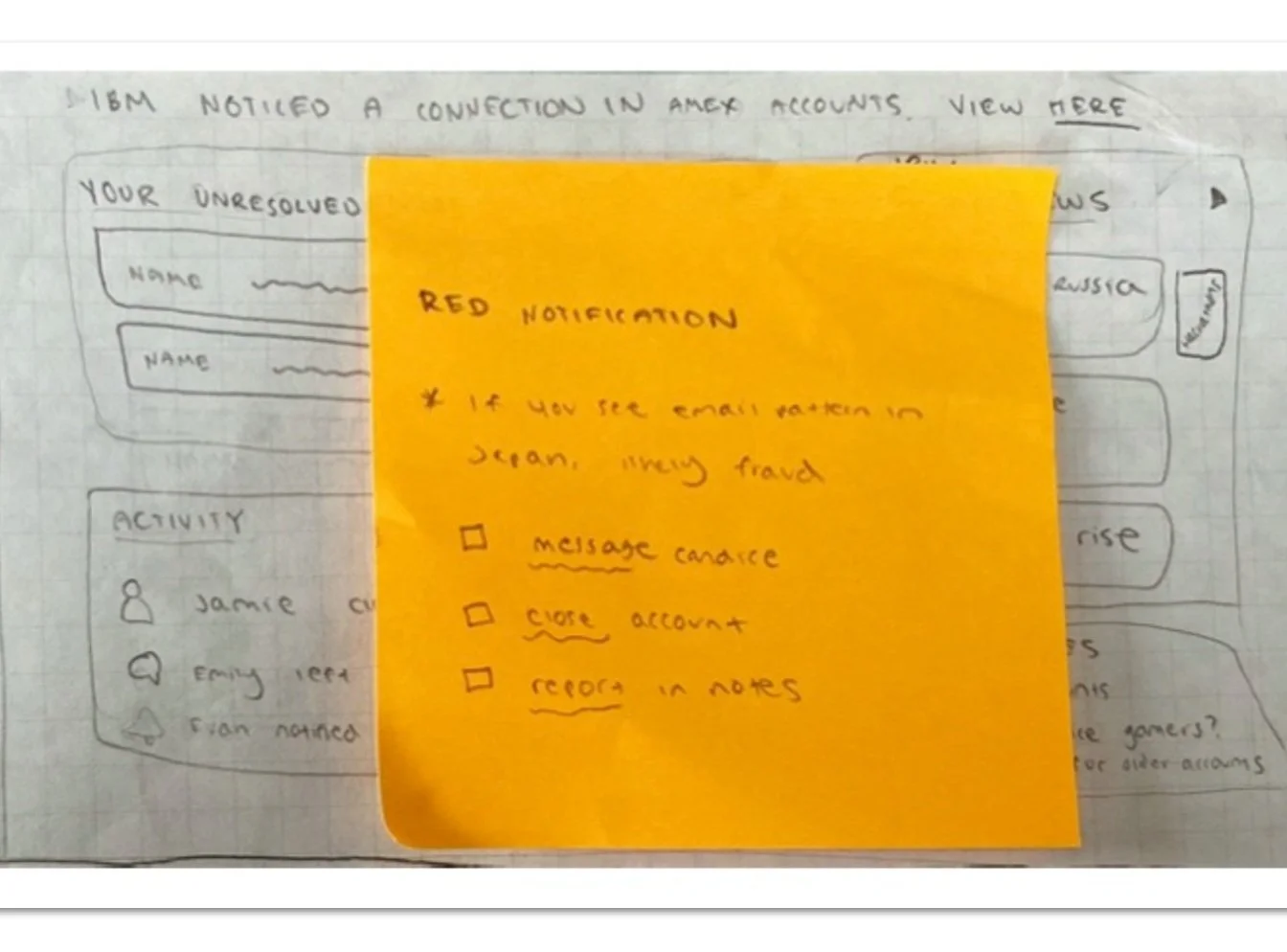

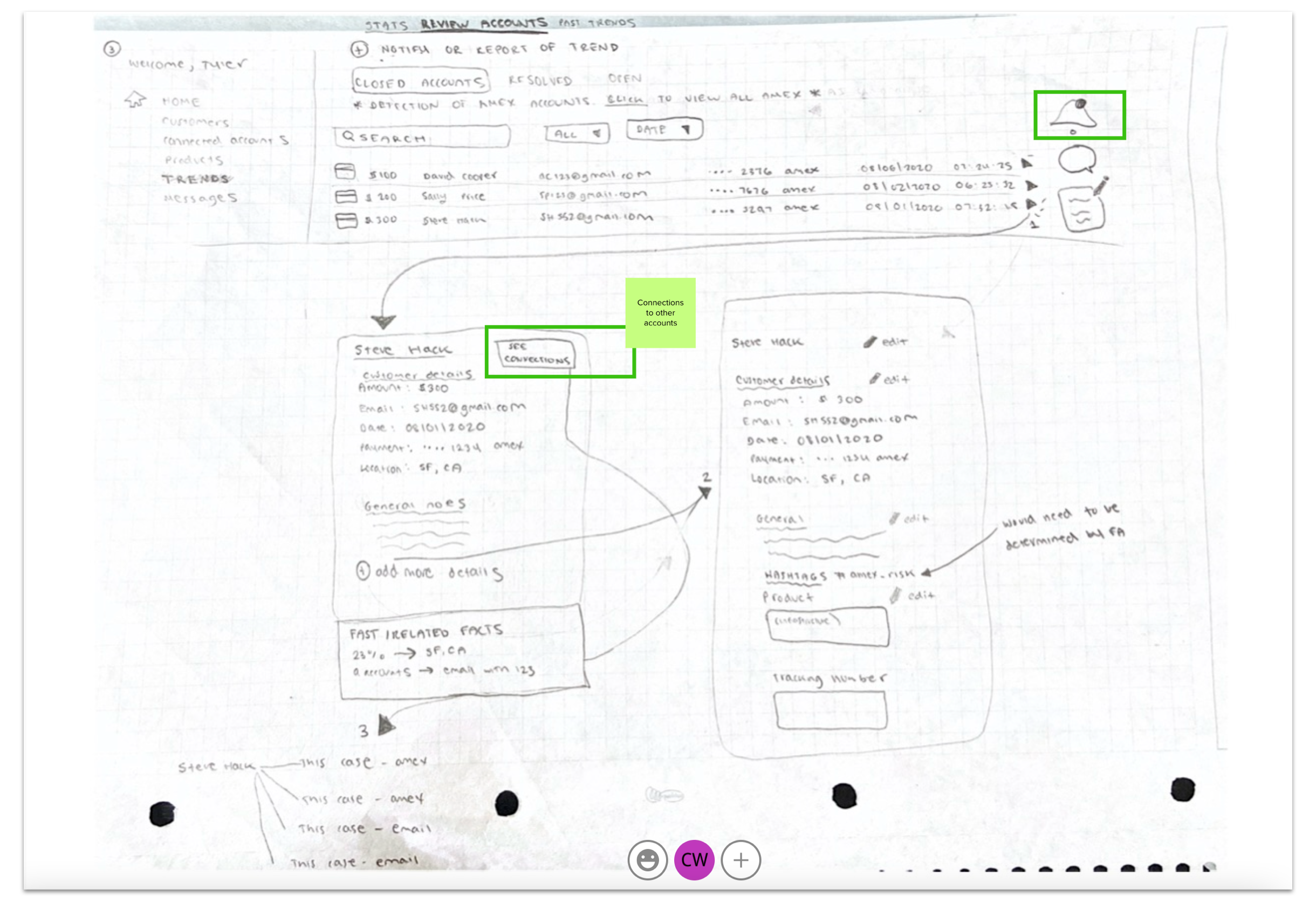

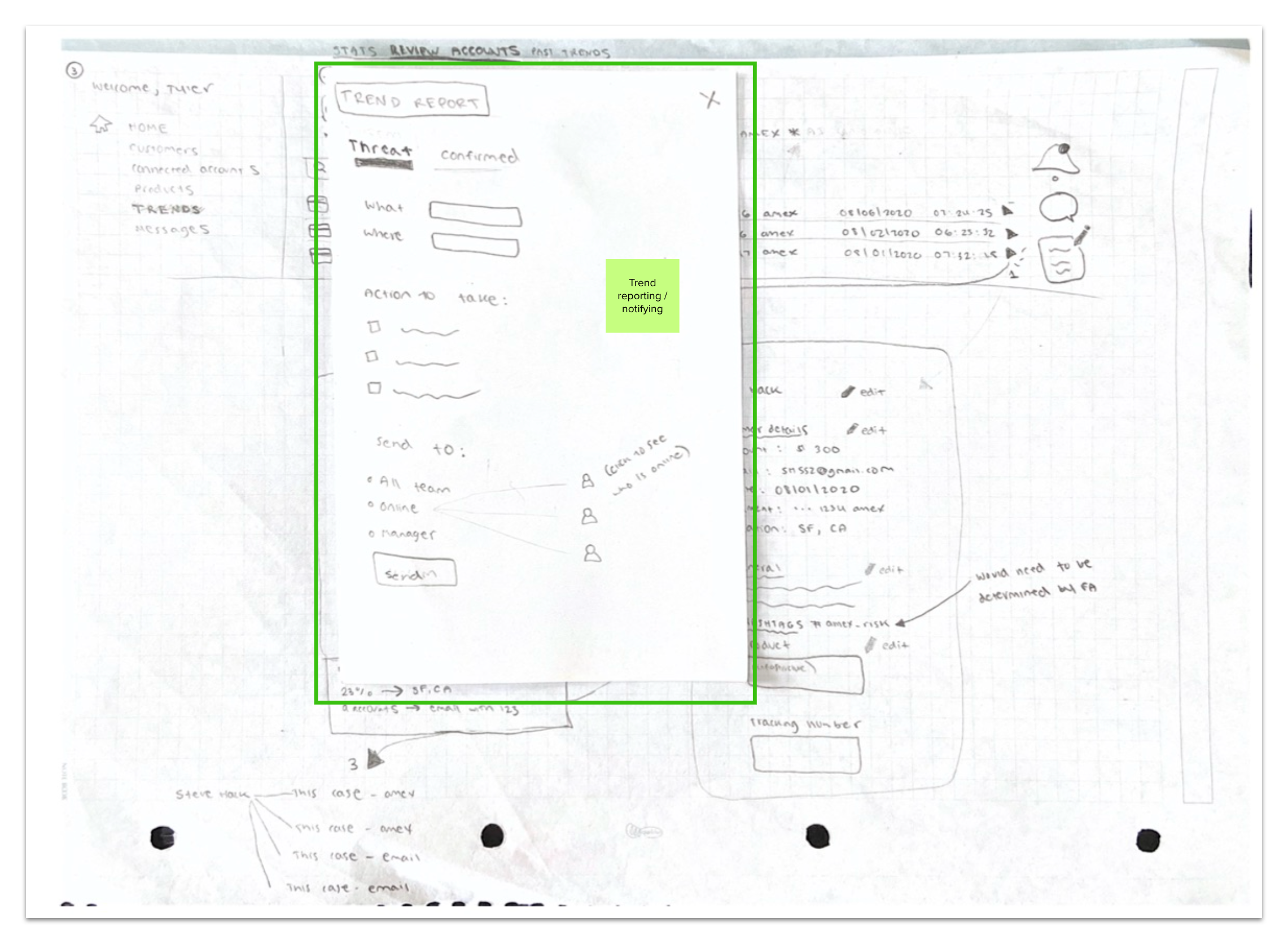

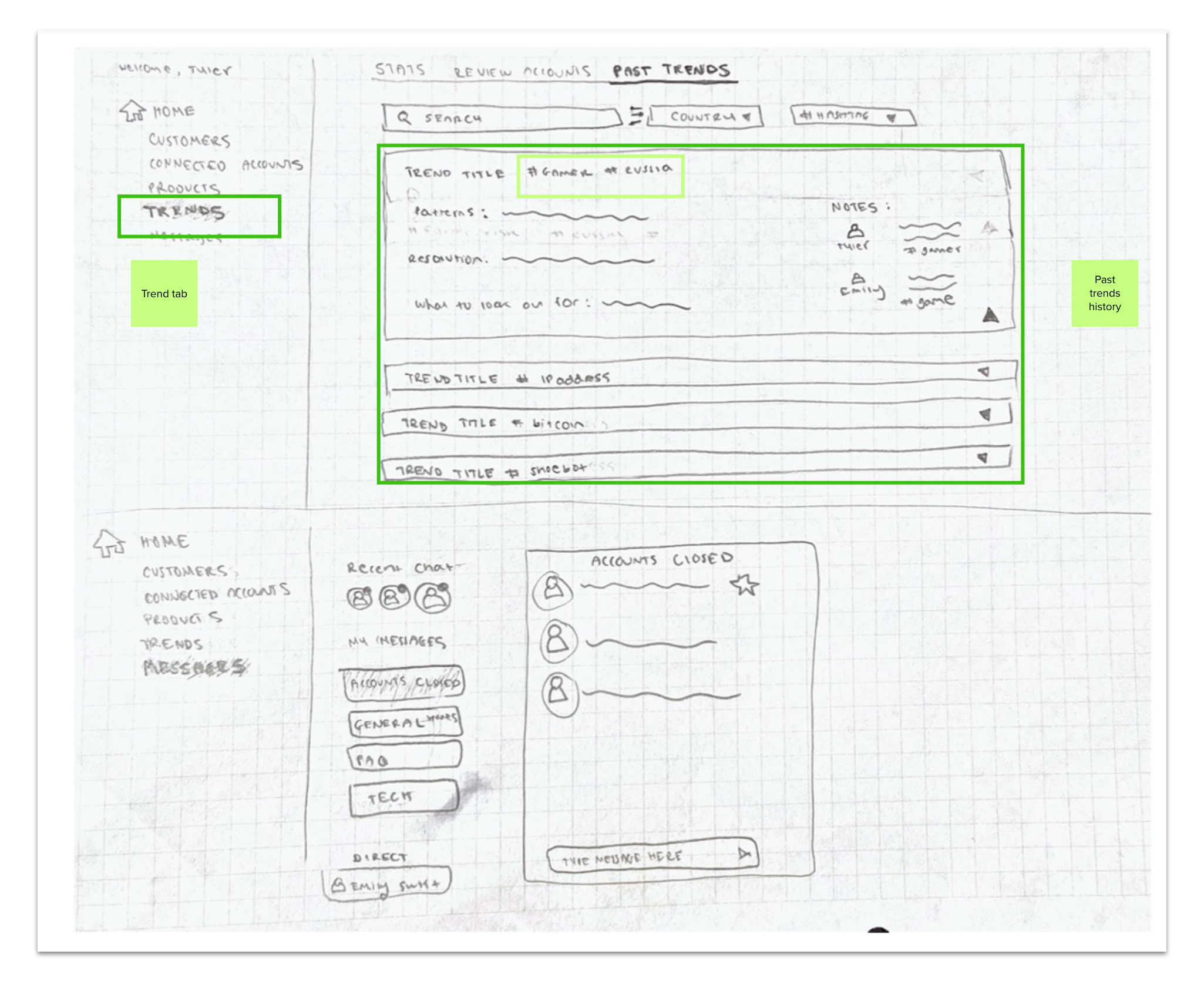

Low-fidelity designs

We each sketched low-fidelity designs of content to show on a unified dashboard experience. We also explored how to display fraud trend data visually.

Mapping low-fidelity designs to pain points

Mid-fidelity designs

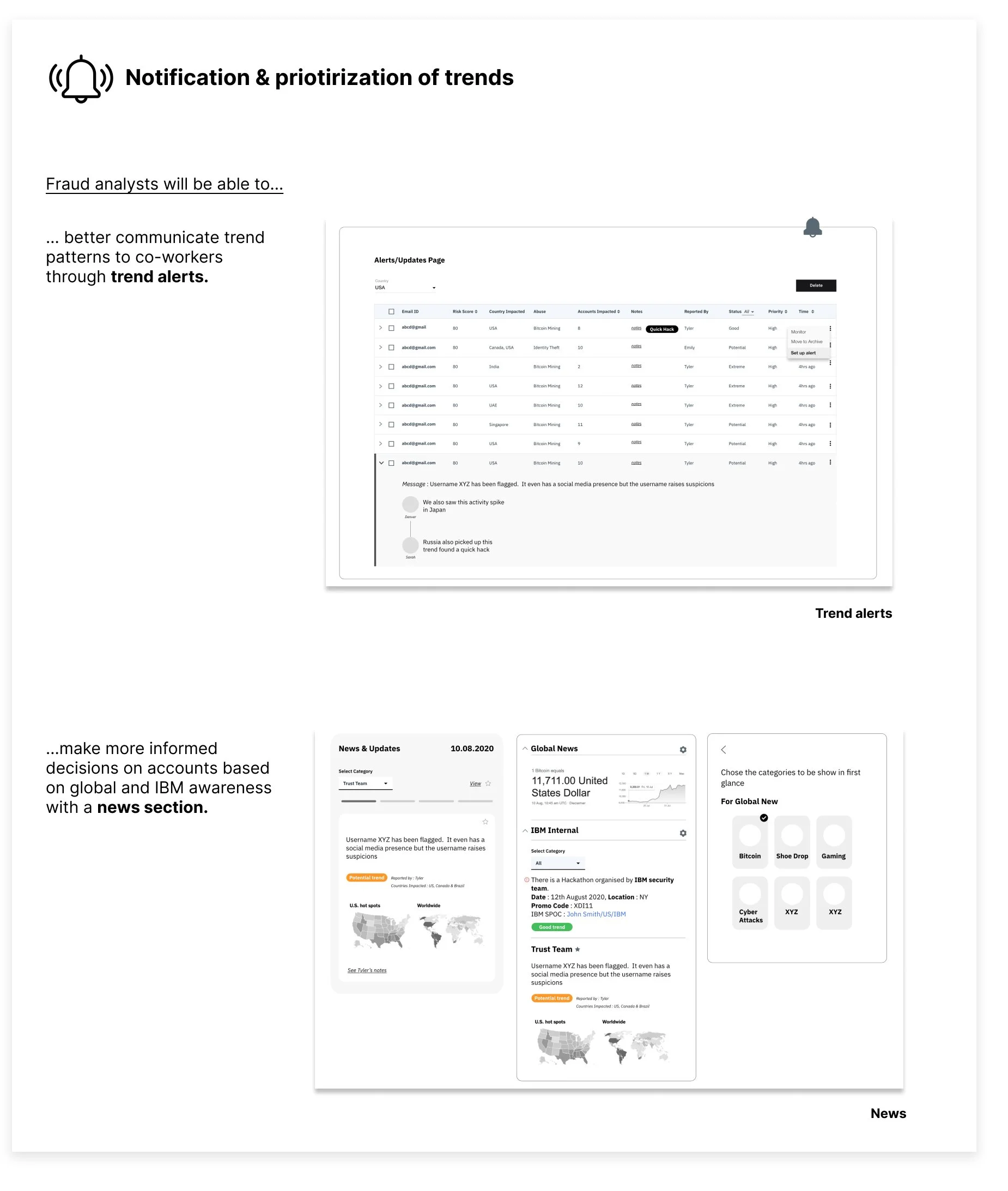

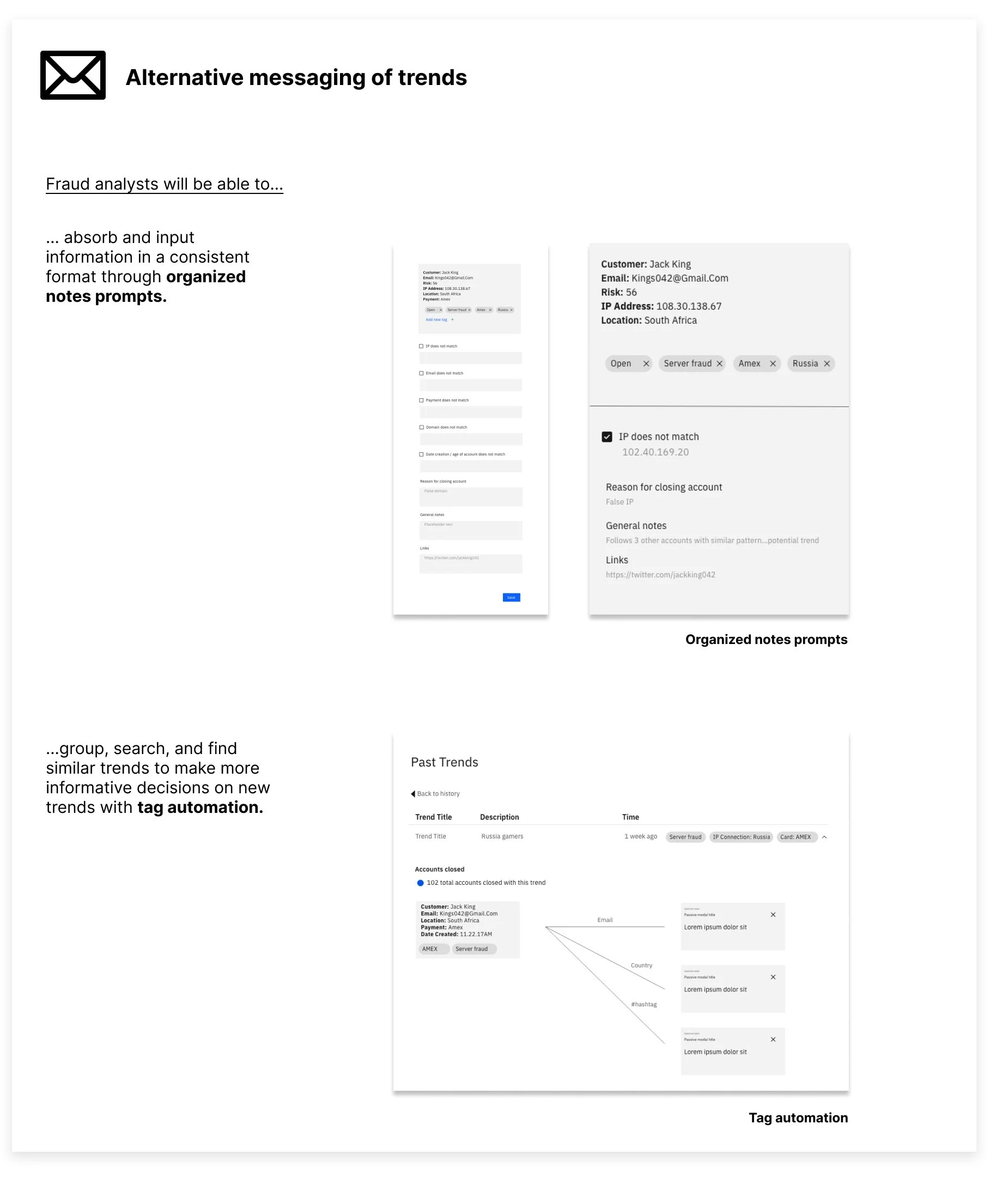

We reviewed and consolidated the low-fidelity concept ideas, and each of us explored one concept at mid-fidelity.

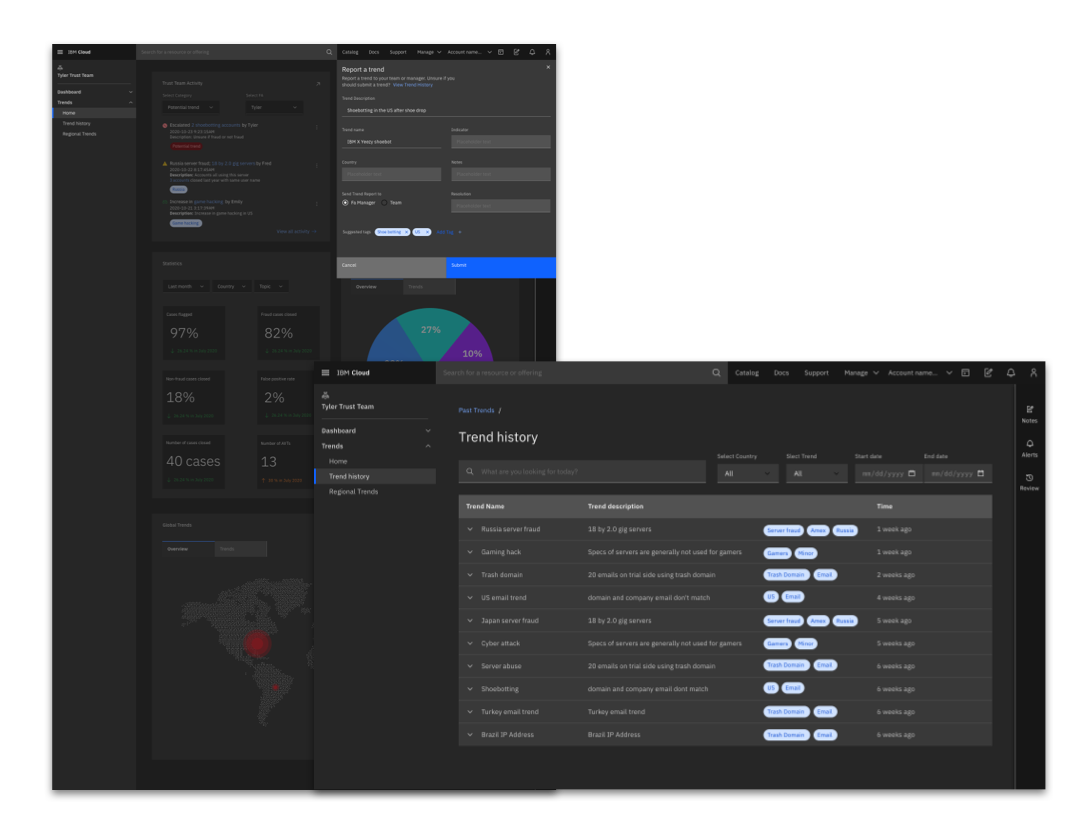

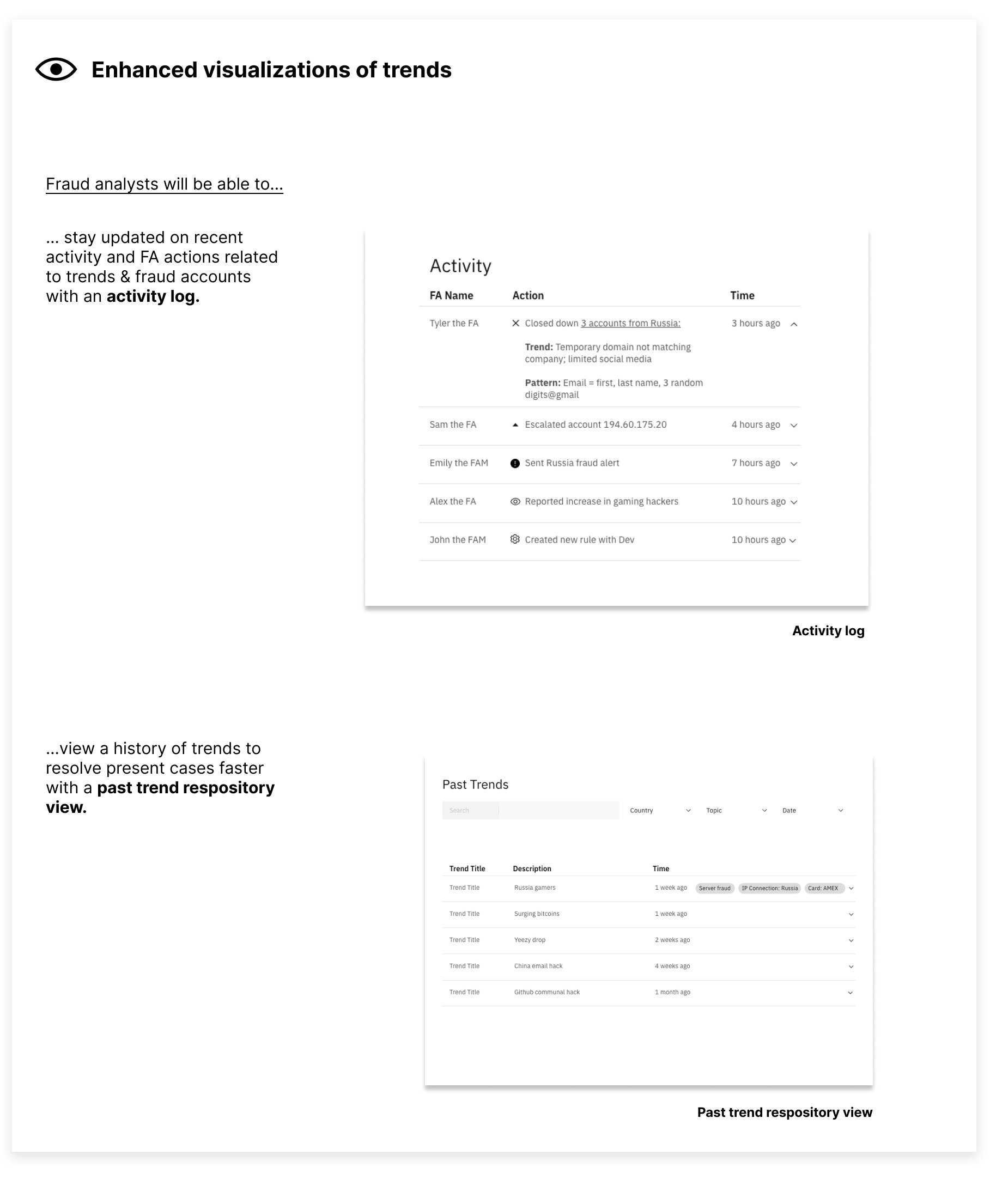

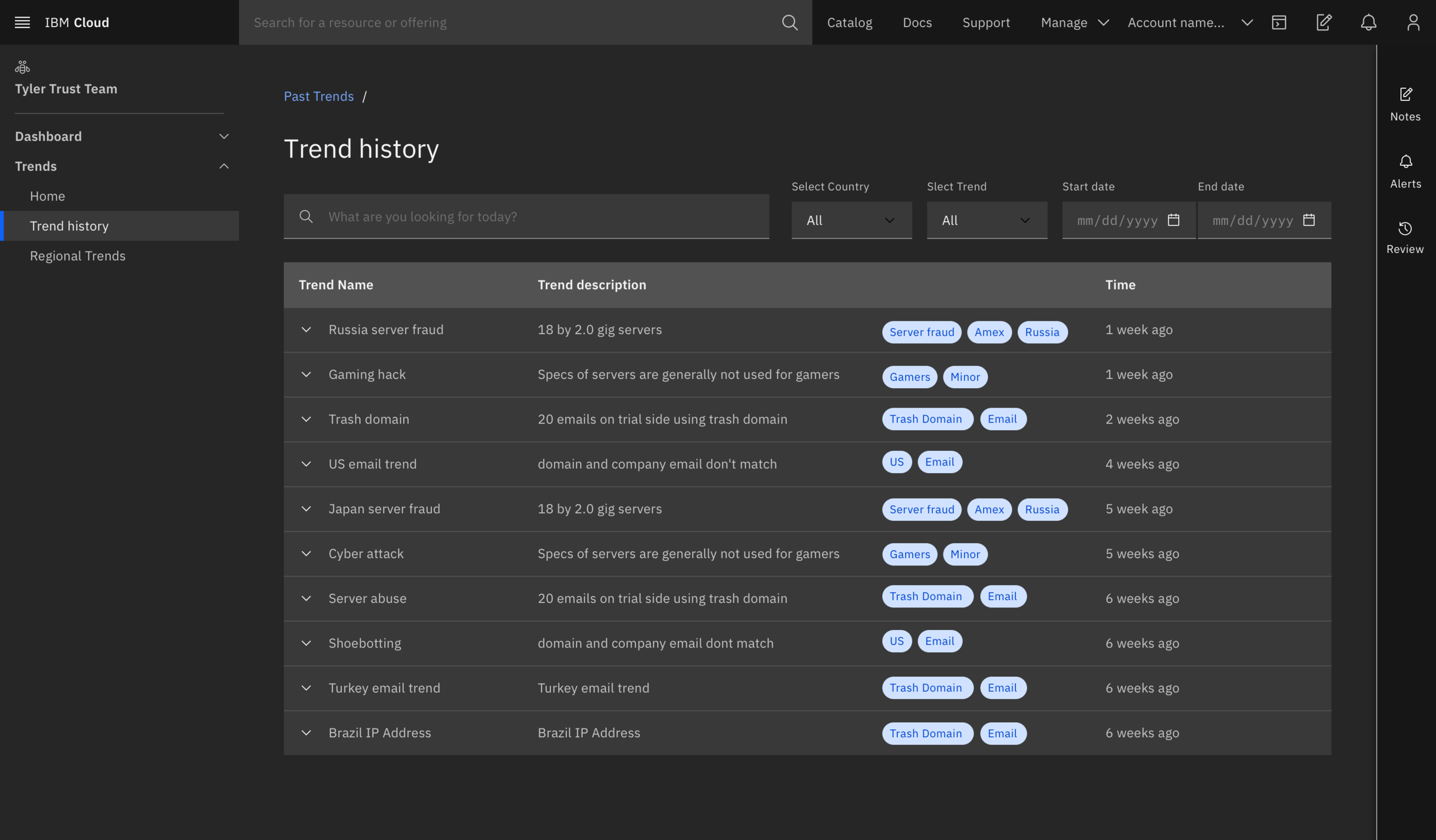

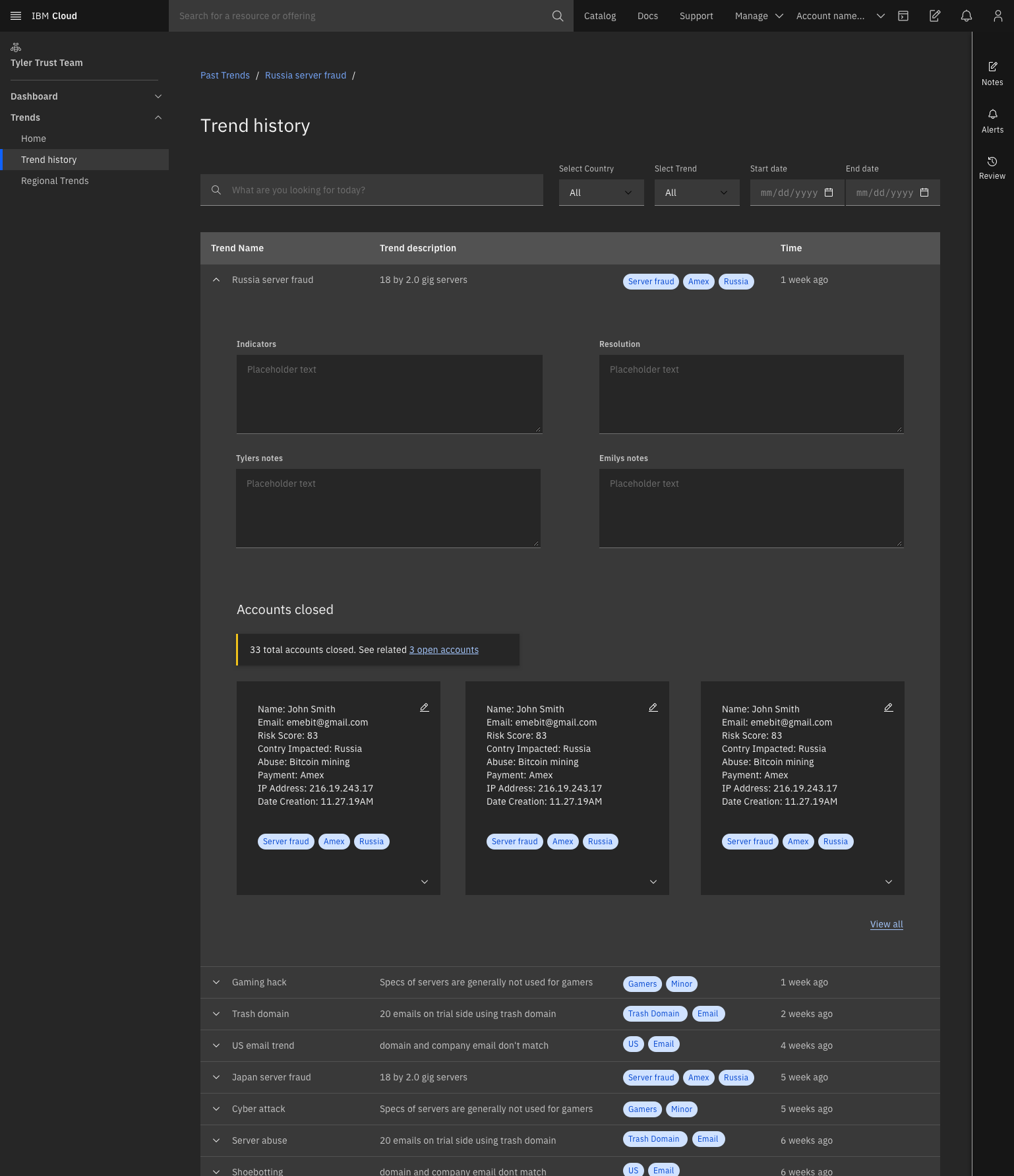

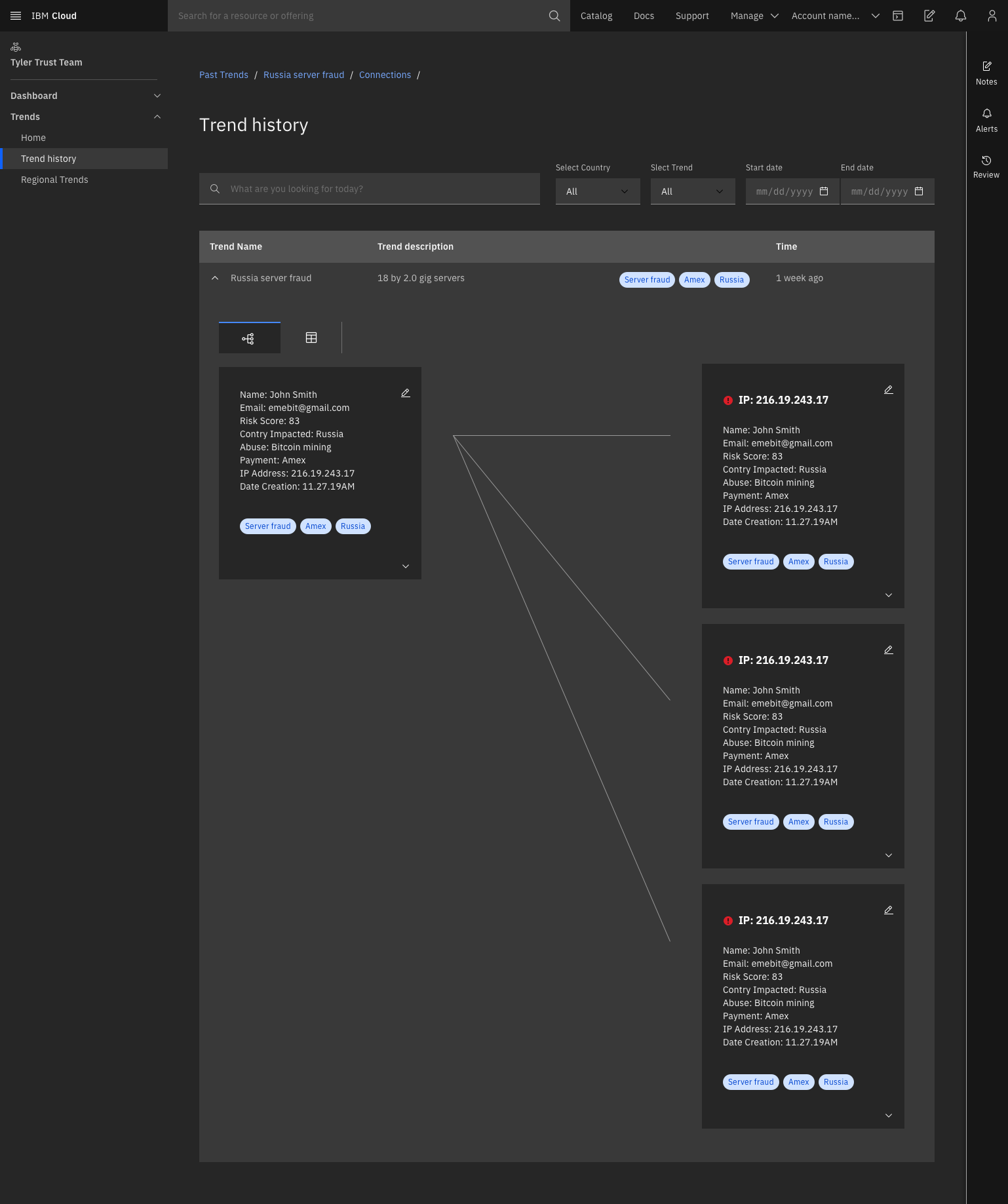

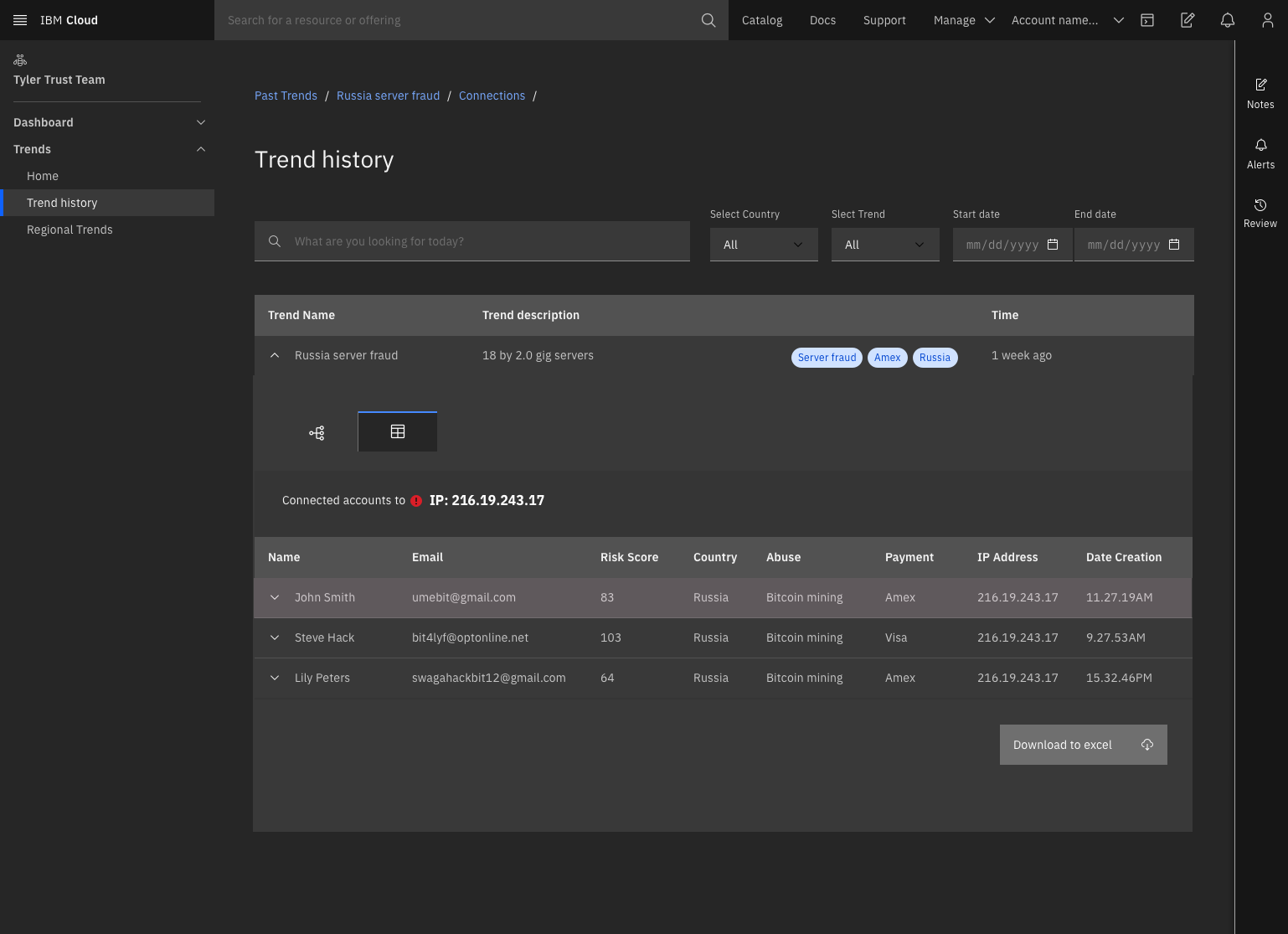

I was responsible for designing the view of the past trend repository and its information hierarchy, which allows fraud analysts to view a history of trends and make more informed decisions on present cases based on how similar cases were handled in the past.

Dashboard architecture

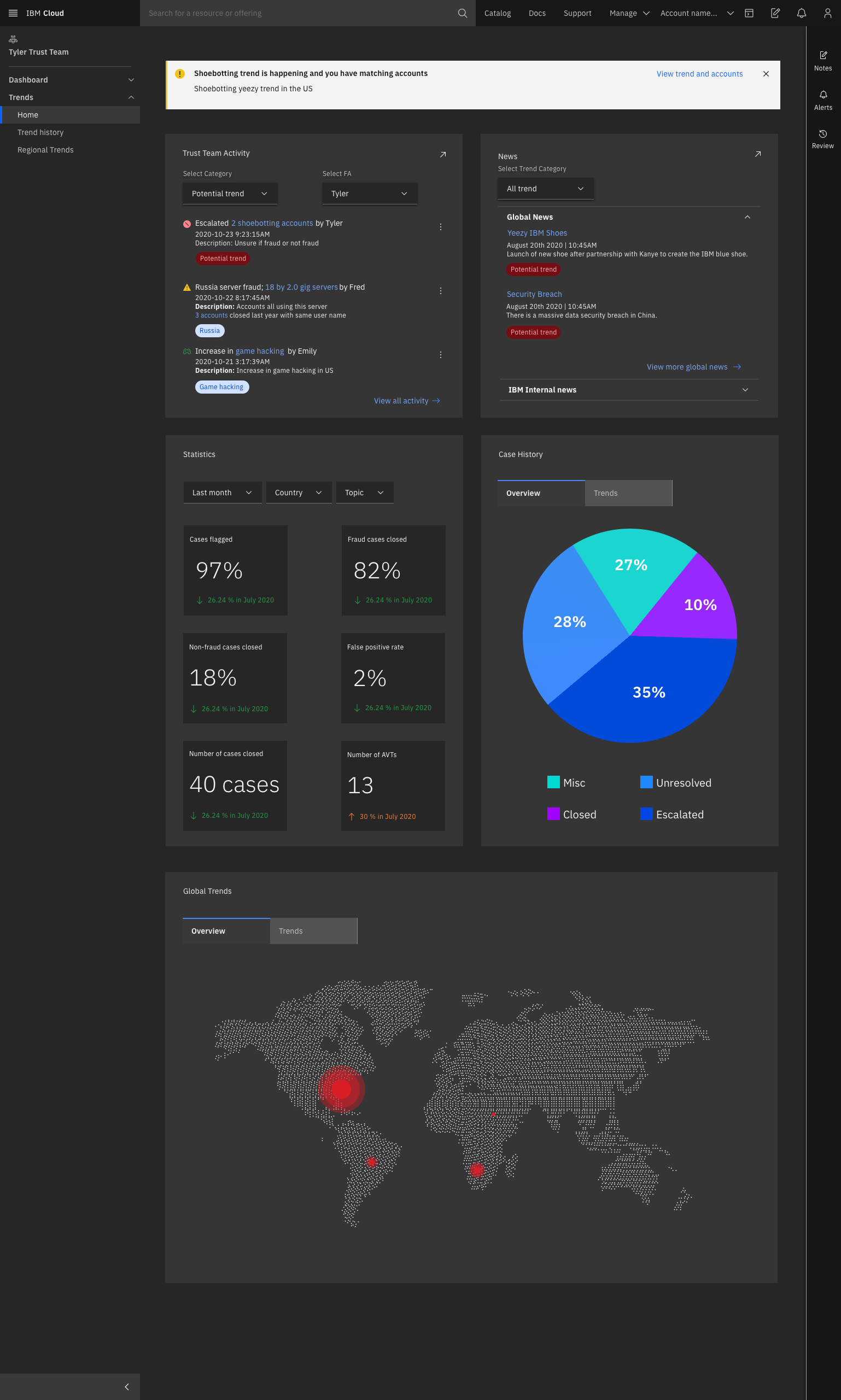

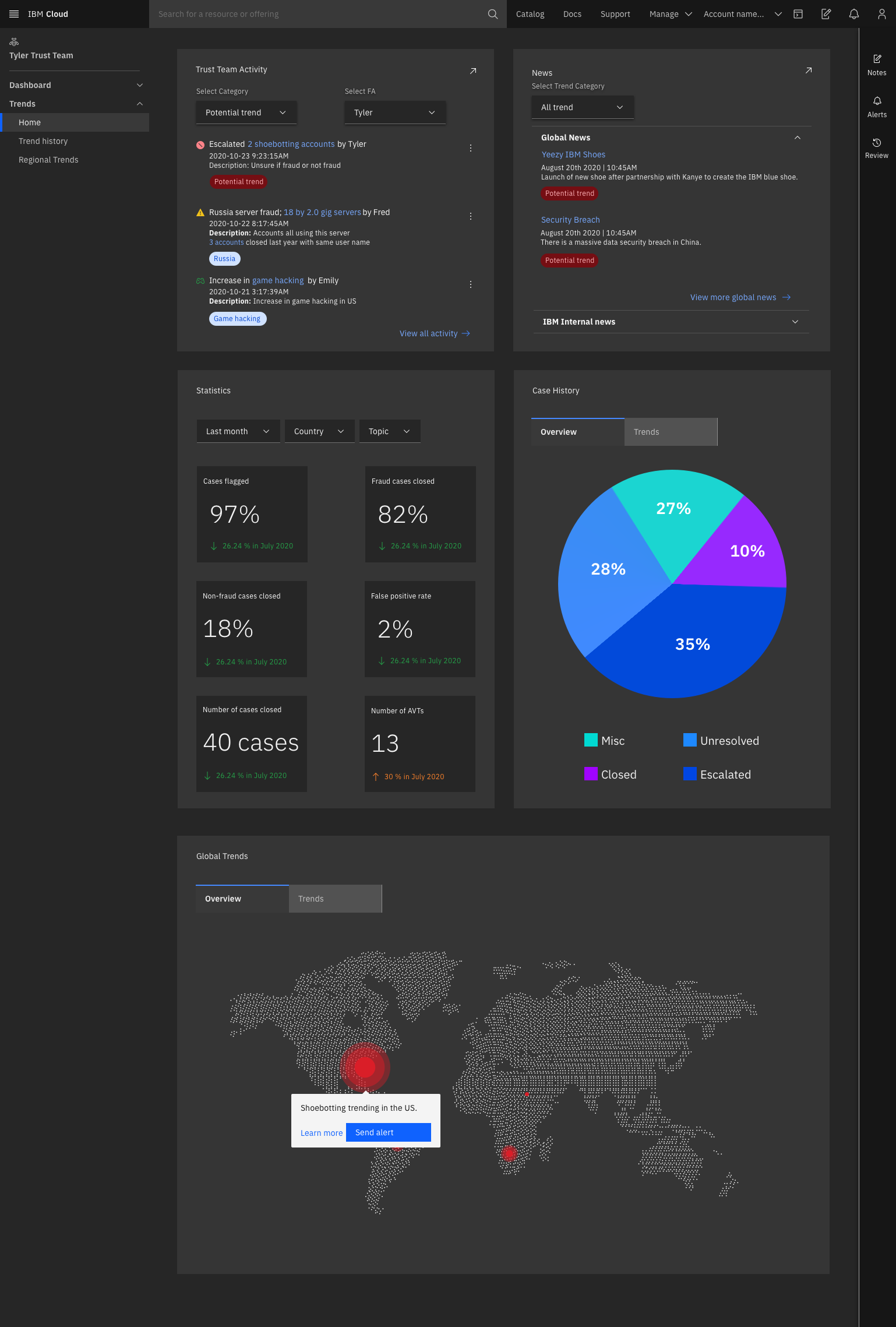

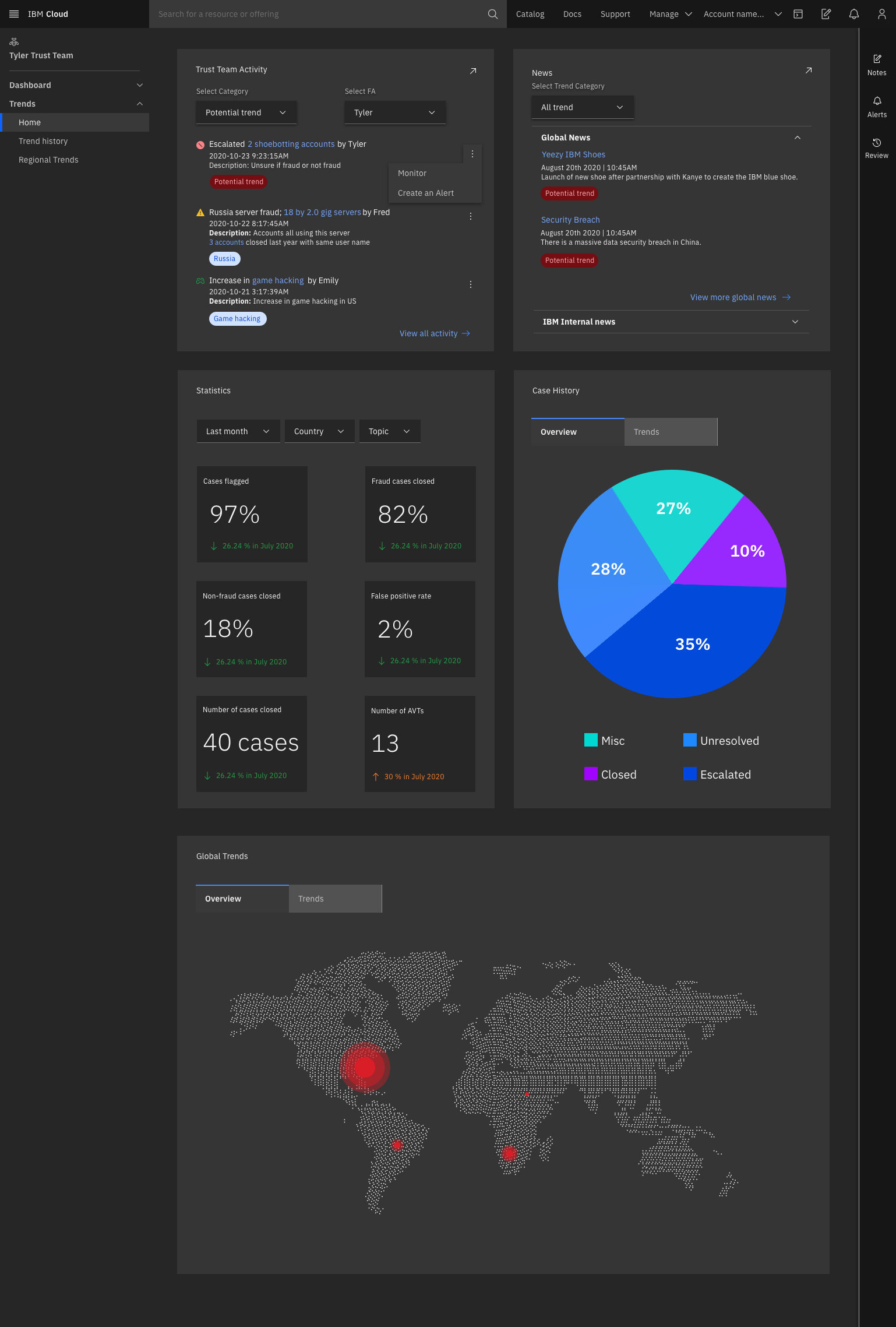

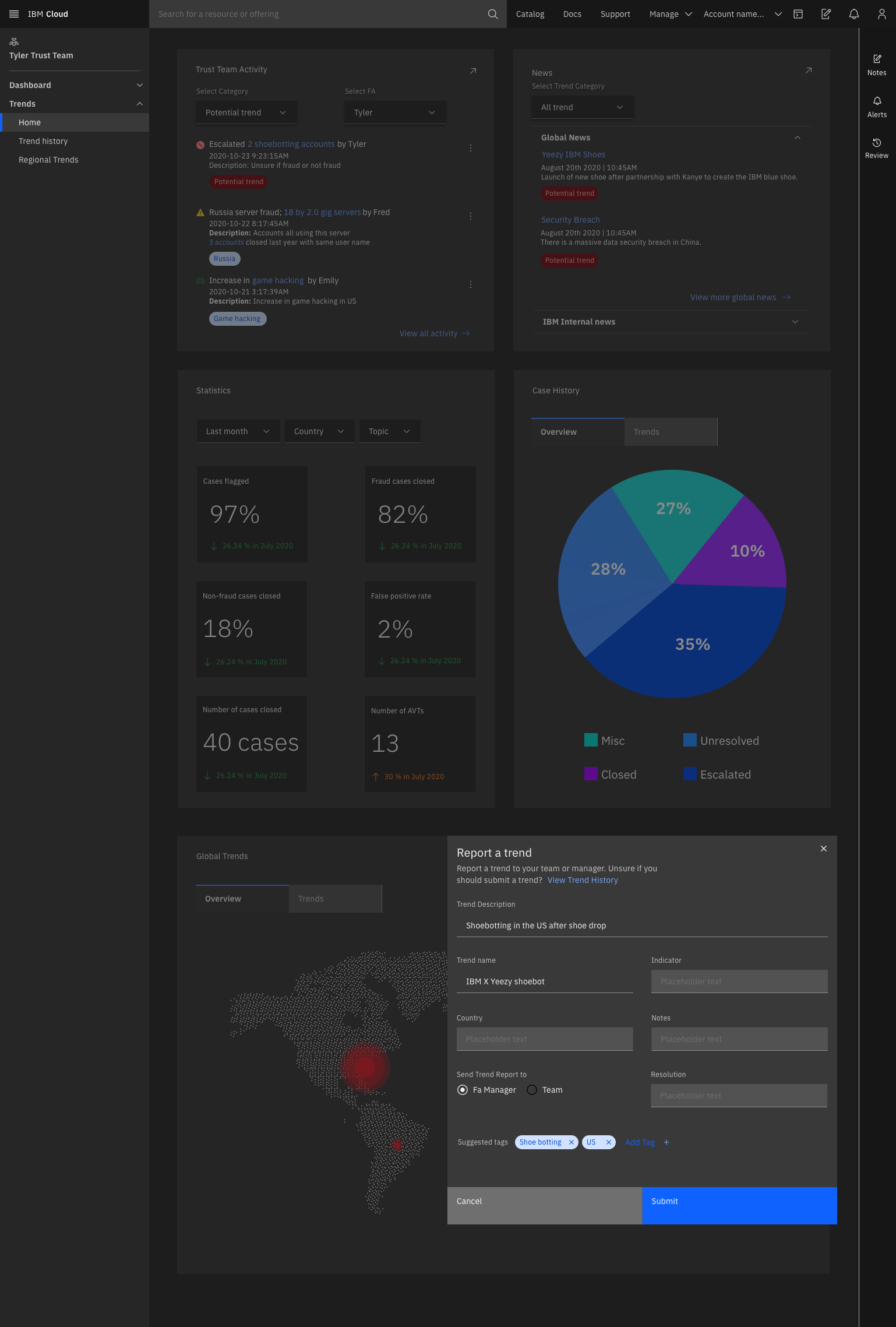

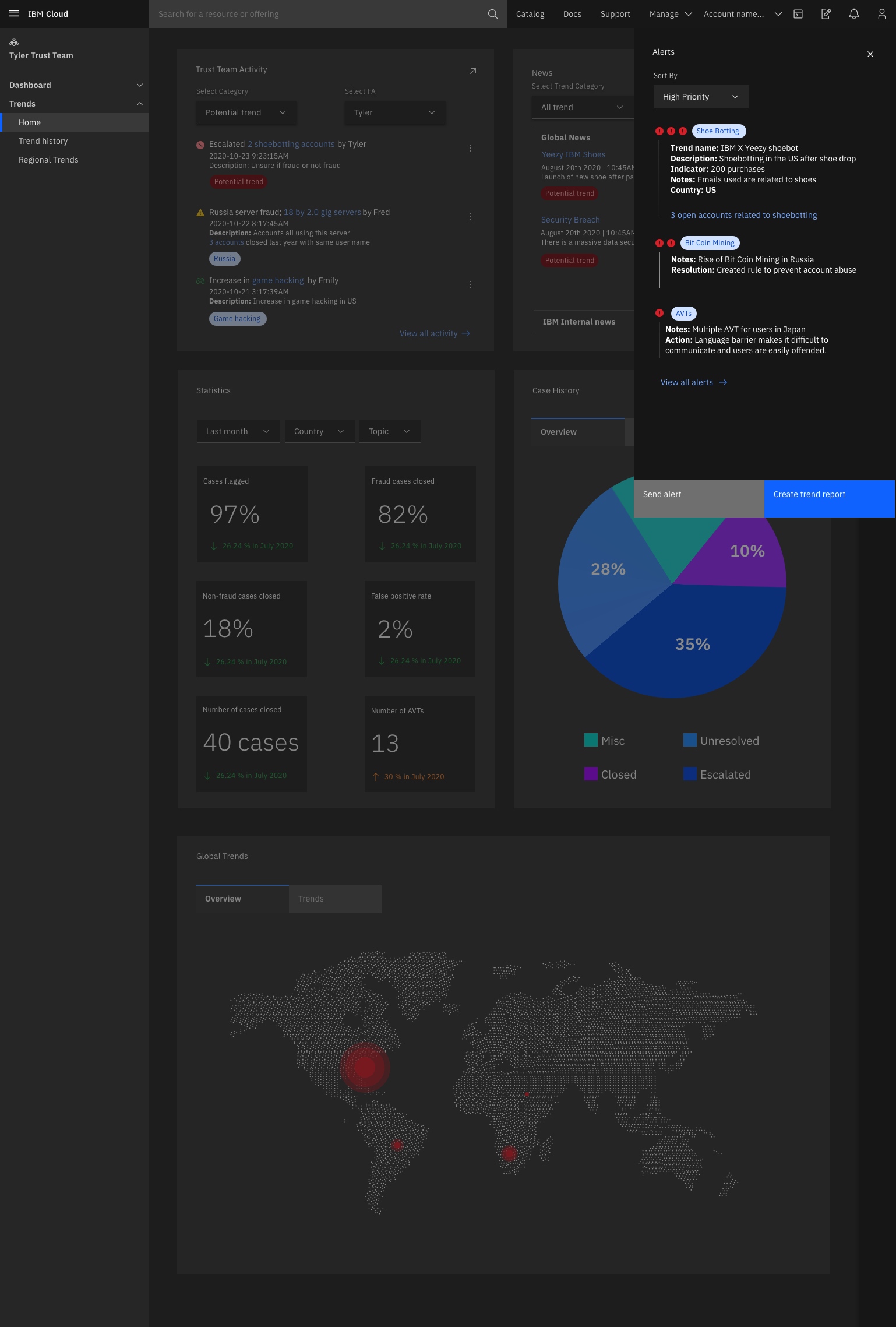

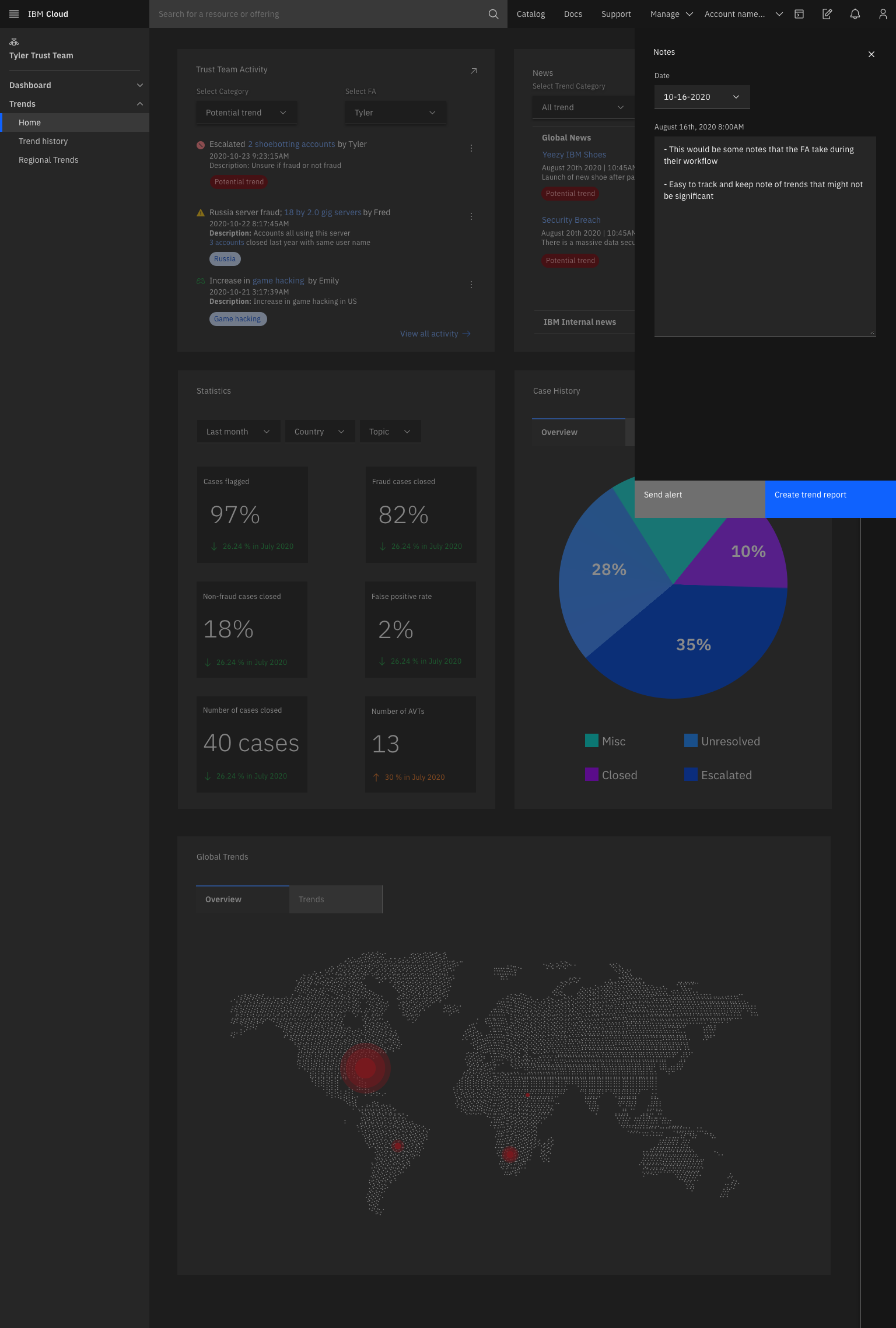

High-fidelity designs

Main dashboard

Trend history

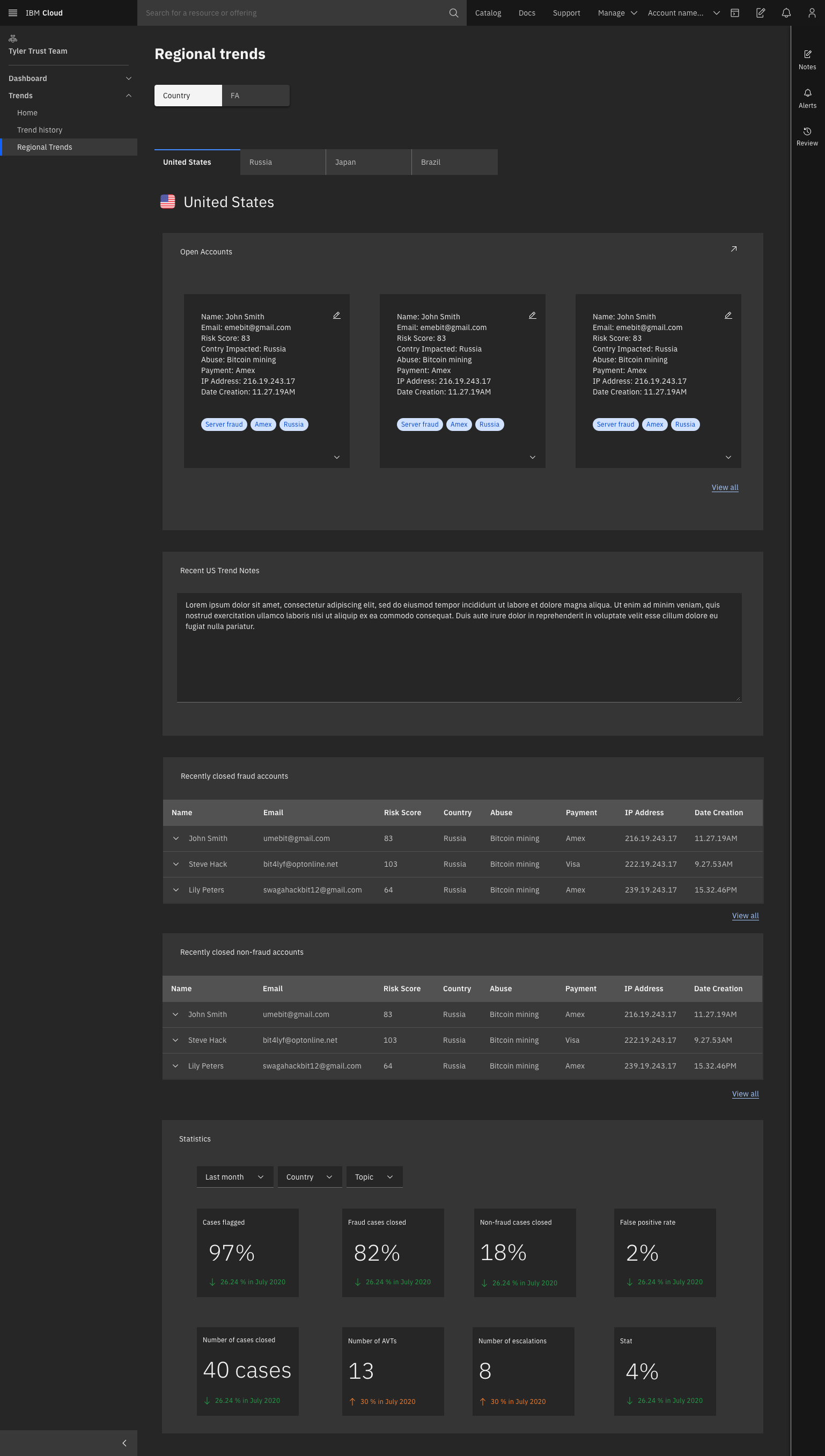

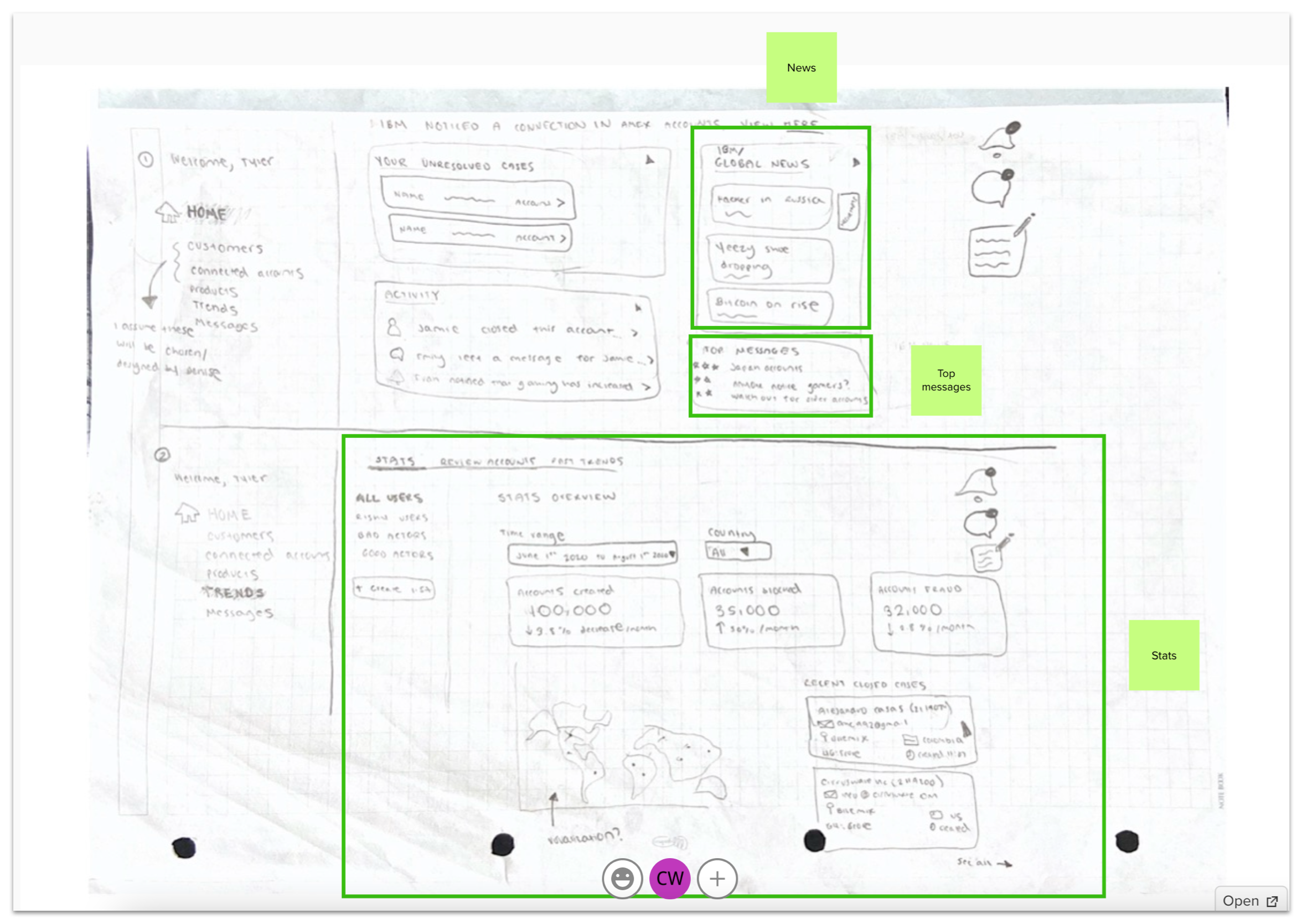

Regional trends

Experience-based roadmap


We crafted an experience-based roadmap to align around what the team will deliver to our users. This MVP will serve as a springboard for future iterations as the team tests its efficacy with real users.

Experience-based roadmap workshop results with the stakeholders

Reflection - were we successful?

The cloud team used our research to define their future work and guide prioritization. Six months after our design handoff, the cloud team informed us that they incorporated four of our main design feature proposals into their current work streams (case history, alerting, association of accounts, consistent case reports). They also used our prototype in additional workshops to align their design and development teams.

With regard to our hill statements, the team did not yet have enough users engaging with the product to draw conclusions about the KPI metrics. They plan to continue to track fraud account activity as they onboard more internal IBM fraud analysts onto the tool.

We shared our mid-fidelity designs with stakeholders and end users to get feedback, and received positive validation. However, if we had more time, we would have liked to further test concepts and experiences with fraud analysts to address some of our accessibility and usability concerns.

Learnings

Balancing time zones. Two team members were located in India. We learned how to effectively hand off and communicate task expectations despite the time difference and take advantage of our ‘24-hour’ workday. We leveraged Slack recaps, morning meeting syncs, and asynchronous video sharing.

The power of storytelling. We shared the IBM Yeezy story in our final presentation to guide our project review and remove the complex technical barrier for our audience (see story above). We received great feedback from our sponsors: “Holy sh*t Y’all killed it. It came so far and was one of the best I’ve ever seen in my 7 years of these.”

Importance of developing a learning model for design challenges. Through this experience, I developed an overview that I call the “4 Cs” to help guide any designer through a project:

Context - what is most important to our users at the time of the experience and what are their goals?

Cases - happy path, edge cases, error states, oh my. Did we consider all of them?

Concept - is there a standard for this design? Are there existing patterns within internal or external products that we can take advantage of?

Content - is this content necessary or can we modify the UI to guide our user? Is it clear? Does it provide enough information in context? Will users know their next steps and have enough details to make informed decisions?